Last Wednesday and Thursday, the Swiss-based supply chain community had the opportunity to enjoy the 6th edition of the Swiss Supply Chain & Logistics Conference, hosted by the MaxComm Communication team and conference chair Taoufik ARIF 🌿.

On one of those days there was an interesting panel conversation, moderated by Thomas Heynen from Adnovum, with Claire Fallen from adidas, Varsha Asarpota from Ascensia Diabetes Care, Cedric Spineux from Clariant and Marc Weissenfeld from Bayer, on the topic of Advancing Supply Chain Visibility into Intelligent Decisions.

The room was full of practitioners - 100+ operators, technologists, procurement leaders - people who actually run things. Not a conference audience looking for inspiration. People looking for answers they can take back to work on Monday.

Twenty minutes in, Frank from the audience asked the question that always comes up in any relevant conversation about agentic AI (pictured below):

Are any of you getting hard ROI such as a reduction in headcount as a direct result of AI implementation?

Nine upvotes. The room leaned in.

It is a completely understandable question. And in my humble opinion - it is almost entirely the wrong one.

What followed was a conversation that the panel - and the audience - clearly needed to have. Not about AI technology. About measurement.

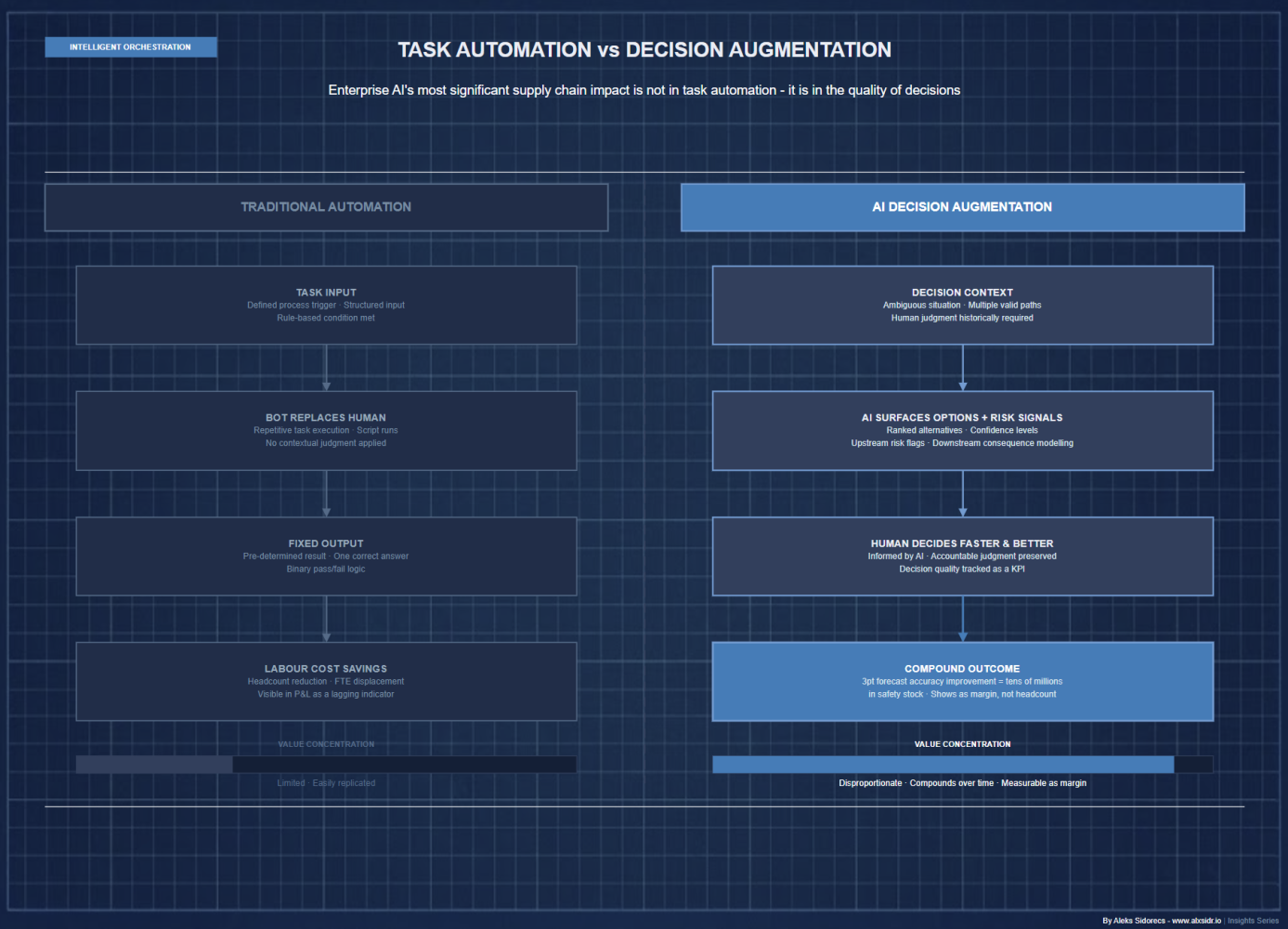

Why Hard ROI Is the Wrong Frame

The headcount question reveals something important about where most organisations are in their AI journey - not about the technology itself.

When finance asks for headcount reduction as the primary evidence of AI value, it is reaching for the most legible metric it already knows how to handle. Headcount is visible. It appears in the P&L. It has a clear before-and-after. It is, in the language of operations, a lagging indicator that everyone in the room can point to.

The problem is structural. The most significant value that AI delivers in supply chain and operations is not task automation - it is decision augmentation. And decision quality does not show up cleanly in headcount numbers.

A 3-percentage-point improvement in demand forecast accuracy across a 500 million euro product portfolio is worth tens of millions in reduced safety stock, fewer stockouts, and improved service levels. That value is real. It is measurable. But it does not show up as a reduction in headcount. It shows up as margin - slowly, consistently, compounding over time.

The organisations asking the headcount question are looking for AI to behave like a previous generation of automation technology. It does not. And if that is the primary measurement lens, most AI deployments will fail - not because they did not deliver value, but because the value they delivered was never instrumented correctly.

The Measurement Gap Is the Real Problem

Here is the number that should concern every operations leader in that room:

According to a Forbes Research survey, 39% of respondents cite measuring ROI and business impact as a top challenge - and fewer than 1% have achieved significant ROI from AI.

In 2025, the share of AI use cases reaching full production doubled year-on-year, according to the ISG (Information Services Group) State of Enterprise AI report. Worker access to AI rose 50% in the same period, and the number of companies running 40% or more of their AI projects in production is set to double again within six months, according to Deloitte's 2026 State of AI survey of over 3,000 senior leaders. The deployment curve has clearly crossed the adoption threshold. The measurement curve has not kept pace.

This is not a technology failure. It is a measurement architecture failure.

When an AI system simultaneously touches a procurement workflow, a customer escalation queue, a logistics routing decision, and a compliance review, the attribution problem becomes genuinely complex. Which model produced which outcome? Which human decision was augmented versus replaced? Which efficiency gain would have occurred through process improvement alone?

Standard ROI frameworks were built for a world where you could draw a clean line between an intervention and an outcome. AI deployments - particularly the multi-agent, agentic architectures now entering production - do not behave that way.

The result is measurement paralysis: organisations that cannot prove value, cannot justify continued investment, and quietly let transformation programmes stall.

But there is an upstream problem that makes measurement paralysis worse. Before the measurement stack can function, there is a prerequisite that most organisations skip: data readiness. You cannot measure what you have not instrumented, and you cannot instrument what lives in spreadsheets, siloed systems, and undocumented tribal knowledge. The 95% of AI initiatives that failed to deliver sustained ROI in 2025 were not primarily failing because of model performance. They were failing because the data infrastructure required to measure - and therefore improve - AI performance simply did not exist. Fix the measurement architecture and you will still fail if the data underneath it is broken.

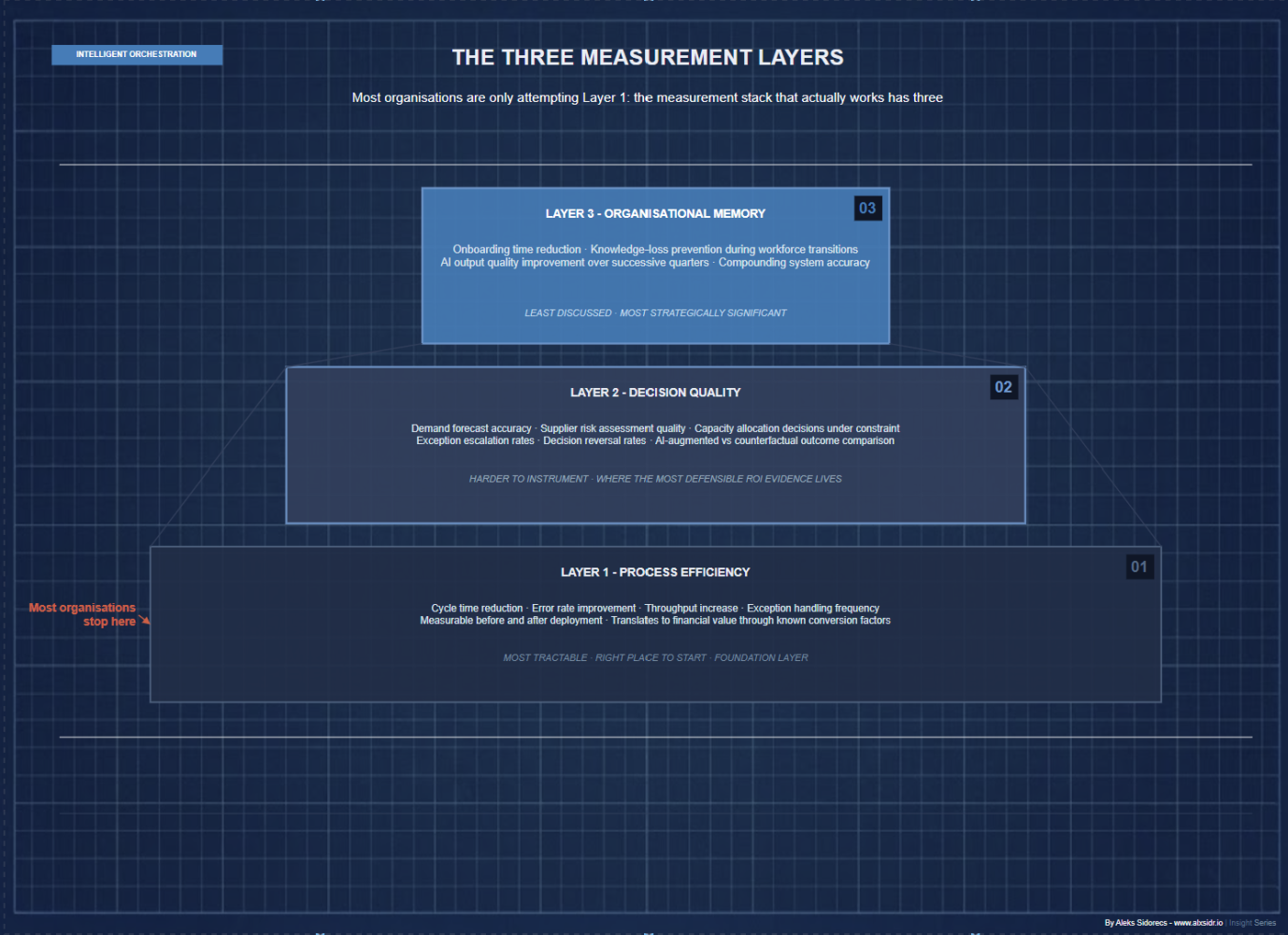

The Three Layers Most Organisations Are Missing

The measurement stack that actually works has three layers. Most organisations are only attempting one.

Layer 1 - Process-Level Efficiency Gains

This is the most tractable layer and the right place to start.

Process-level measurement captures cycle time reduction, error rate improvement, throughput increase, and exception handling frequency at the workflow level - before trying to aggregate those signals into a financial number.

A procurement team processing 40% more purchase orders with the same headcount. A logistics control tower resolving carrier exceptions in 18 minutes instead of 4 hours. A finance team closing month-end two days earlier.

These are operational metrics, not financial ones - but they are the foundation. They are measurable before and after deployment, attributable to specific process changes, and translatable into financial value through conversion factors that finance teams already use.
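To make the before-and-after idea concrete, here is a minimal Python sketch. It reuses the two figures quoted above (18 minutes instead of 4 hours, 40% more purchase orders) and then applies a conversion factor. The class `ProcessMetric`, the function `annual_value`, the yearly case volume and the hourly rate are all hypothetical, chosen only for illustration. In practice the conversion factors would come from finance, not from this sketch.

```python
from dataclasses import dataclass


@dataclass
class ProcessMetric:
    """Before/after measurement of one workflow-level metric (hypothetical helper)."""
    name: str
    before: float
    after: float
    lower_is_better: bool = True

    def relative_improvement(self) -> float:
        """Fractional improvement relative to the pre-deployment baseline."""
        if self.lower_is_better:
            change = self.before - self.after
        else:
            change = self.after - self.before
        return change / self.before


def annual_value(minutes_saved_per_case: float, cases_per_year: int,
                 cost_per_hour: float) -> float:
    """Convert time saved per case into a yearly figure via an hourly rate."""
    return minutes_saved_per_case / 60 * cases_per_year * cost_per_hour


# From the text: carrier exceptions resolved in 18 minutes instead of 4 hours.
exceptions = ProcessMetric("carrier exception resolution (min)", before=240, after=18)
# From the text: 40% more purchase orders with the same headcount (indexed to 100).
throughput = ProcessMetric("purchase orders (indexed)", before=100, after=140,
                           lower_is_better=False)

print(f"{exceptions.name}: {exceptions.relative_improvement():.1%} improvement")
print(f"{throughput.name}: {throughput.relative_improvement():.1%} improvement")

# ASSUMPTION: 1,000 exceptions per year and EUR 50 per hour are made-up
# conversion factors, not figures from the panel or any company.
value = annual_value(minutes_saved_per_case=240 - 18, cases_per_year=1_000,
                     cost_per_hour=50.0)
print(f"Illustrative annual value: EUR {value:,.0f}")
```

The point of the design is the order of operations: the operational metric is recorded first and stands on its own, and the financial number is derived from it with explicit, reviewable factors.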

The mistake most organisations make is skipping this layer entirely and reaching directly for P&L impact - which requires assumptions that neither operations nor finance can defend under scrutiny.

Layer 2 - Decision Quality Improvement

This layer is harder to instrument but arguably more valuable.

Enterprise AI's most significant supply chain impact is not in task automation. It is in the quality of decisions: demand forecasts, supplier risk assessments, capacity allocations under constraint. These decisions happen constantly, they compound over time, and their quality has enormous financial consequences that traditional measurement frameworks were never designed to capture.

Decision quality measurement requires establishing baselines before deployment - forecast accuracy rates, exception escalation rates, decision reversal rates - and tracking them systematically after. It requires treating AI-augmented decisions as a distinct population and comparing outcomes against the counterfactual.
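As a rough illustration of what baselining can look like, the Python sketch below compares forecast error and decision reversal rates for two populations: decisions made with the pre-deployment method and AI-augmented decisions. Forecast error is expressed as mean absolute percentage error (MAPE), which is one common choice rather than the only one. All data, and the names `mape` and `reversal_rate`, are hypothetical. Using the old method's forecasts over the same periods is only a simple stand-in for a true counterfactual.

```python
from statistics import mean


def mape(actuals, forecasts):
    """Mean absolute percentage error; lower is better. Zero actuals are skipped."""
    errors = [abs(a - f) / abs(a) for a, f in zip(actuals, forecasts) if a != 0]
    return mean(errors)


def reversal_rate(decisions, ai_augmented):
    """Share of decisions in one population that a human later reversed."""
    population = [d for d in decisions if d["ai_augmented"] == ai_augmented]
    return sum(1 for d in population if d["reversed"]) / len(population)


# HYPOTHETICAL demand data for the same four periods.
actual_demand = [100, 120, 80, 200]
baseline_forecast = [90, 140, 100, 170]    # pre-deployment method
augmented_forecast = [95, 125, 85, 190]    # AI-augmented method

baseline_mape = mape(actual_demand, baseline_forecast)
augmented_mape = mape(actual_demand, augmented_forecast)
print(f"Baseline MAPE:  {baseline_mape:.1%}")
print(f"Augmented MAPE: {augmented_mape:.1%}")

# HYPOTHETICAL decision log: each entry is tagged with its population.
decision_log = [
    {"ai_augmented": False, "reversed": True},
    {"ai_augmented": False, "reversed": True},
    {"ai_augmented": False, "reversed": False},
    {"ai_augmented": False, "reversed": False},
    {"ai_augmented": True, "reversed": True},
    {"ai_augmented": True, "reversed": False},
    {"ai_augmented": True, "reversed": False},
    {"ai_augmented": True, "reversed": False},
]
baseline_reversals = reversal_rate(decision_log, ai_augmented=False)
augmented_reversals = reversal_rate(decision_log, ai_augmented=True)
print(f"Reversal rate, baseline:  {baseline_reversals:.0%}")
print(f"Reversal rate, augmented: {augmented_reversals:.0%}")
```

Tagging every decision with its population at the moment it is made is what makes this comparison possible later. Reconstructing the tag after the fact is usually much harder.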

This is operationally intensive. But it is the layer where the most defensible ROI evidence lives. And it is the layer that answers Frank's question more honestly than headcount ever could.

What made this panel genuinely interesting was that each company represented a different point on that measurement maturity curve. Some were still building the baseline. Others had decision-quality data but could not connect it to financial outcomes. None - including me - claimed to have the full stack working. That honesty was more useful than any polished case study!

Layer 3 - Organisational Memory Value

This is the least discussed and the most strategically significant layer - and the one that keeps me up at night.

Every interaction with an enterprise AI system, every correction, every escalation, every human override, is a data point that makes the system more accurate and more contextually aware over time. Organisations that capture this are building an asset that compounds. Organisations that ignore it are systematically underreporting the value of everything they have already built.

The relevant proxies are real and measurable: reduction in onboarding time for new operational staff, decrease in knowledge-loss incidents during workforce transitions, improvement in AI output quality over successive quarters.
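One of these proxies, AI output quality over successive quarters, can be approximated by tracking how often humans override the system. The Python sketch below assumes a log of `(quarter, overridden)` events. The log format, the sample events and the function names `override_rate_by_quarter` and `is_improving` are hypothetical, and a falling override rate is only a proxy for quality, not proof of it.

```python
from collections import defaultdict


def override_rate_by_quarter(events):
    """Return {quarter: share of AI outputs that a human overrode}."""
    totals = defaultdict(int)
    overrides = defaultdict(int)
    for quarter, overridden in events:
        totals[quarter] += 1
        if overridden:
            overrides[quarter] += 1
    # Labels such as "2025-Q1" sort chronologically as plain strings.
    return {q: overrides[q] / totals[q] for q in sorted(totals)}


def is_improving(rates):
    """True if the override rate falls in every successive quarter."""
    values = list(rates.values())
    return all(later < earlier for earlier, later in zip(values, values[1:]))


# HYPOTHETICAL override log: (quarter, was the AI output overridden?)
events = [
    ("2025-Q1", True), ("2025-Q1", True), ("2025-Q1", False), ("2025-Q1", False),
    ("2025-Q2", True), ("2025-Q2", False), ("2025-Q2", False), ("2025-Q2", False),
]

rates = override_rate_by_quarter(events)
for quarter, rate in rates.items():
    print(f"{quarter}: {rate:.0%} of outputs overridden")
print("Improving quarter on quarter:", is_improving(rates))
```

The same pattern applies to the other proxies: log the event when it happens, then aggregate by period.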

In the agentic AI context specifically - where systems are making autonomous micro-decisions across logistics, procurement, and operations simultaneously - the organisational memory layer is not optional. It is the infrastructure that makes the entire system improvable over time rather than static.

Back to Frank's Question

So: are organisations getting hard ROI from AI?

Yes. But not primarily through headcount reduction - and the organisations chasing that metric are measuring the wrong thing.

The final shift required is moving from single-initiative ROI to a portfolio view of AI value. Measuring each deployment in isolation - this agent saved X hours, that model reduced Y errors - misses the compound effect. The organisations getting this right are tracking how initial process efficiencies create downstream outcomes: better cash management from faster financial close, improved resilience from better supplier visibility, reduced onboarding costs from accumulated organisational memory. The value compounds. The measurement framework needs to compound with it.

The organisations navigating this well are not the ones with the most sophisticated AI. They are the ones that treated measurement as a first-class deliverable from day one. They instrumented processes before deployment, connected governance logs to KPI frameworks, and built organisational memory as infrastructure rather than an optional enhancement.

Beyond automation, into orchestration. Beyond deployment, into accountability. Finance does not need AI to be perfect. Finance needs AI to be legible. That is where the real operational discipline lives. And it is where the leaders who get this right will separate from the ones still explaining to their CFO why the number is hard to calculate.

Frank's question will keep getting asked - at every supply chain conference, in every board review, in every investment committee meeting where AI is on the agenda. The practitioners in that room at the Swiss Supply Chain & Logistics Conference knew it. The honest ones said so.

But the measurement stack described here only works if the operating model underneath it is designed to support it. That operating model was covered in the previous issue of Intelligent Orchestration.