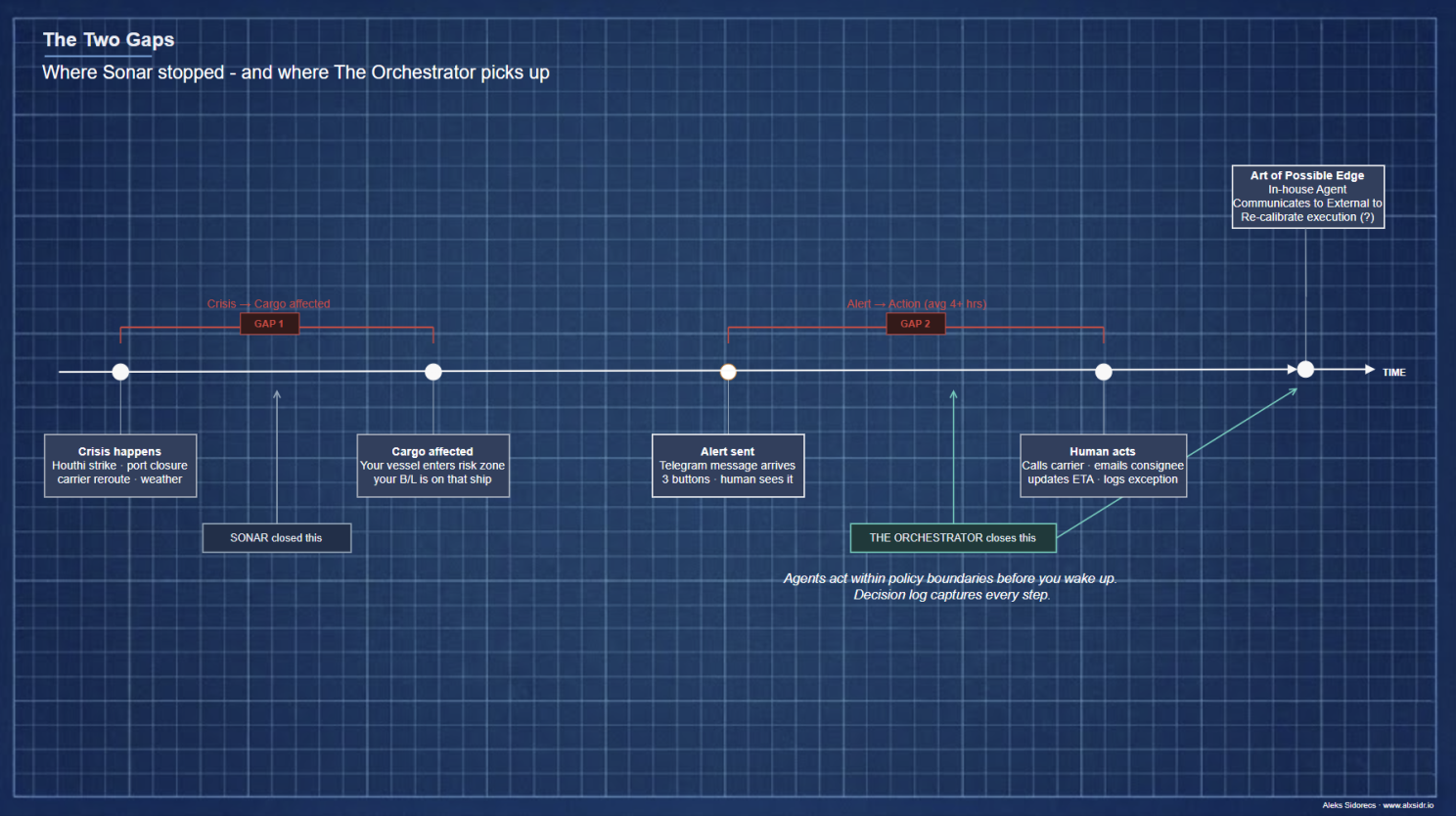

Sonar watched vessels. It detected risk. It sent a Telegram alert with three buttons and waited. That waiting is a gap - the second gap, the one Build Log #1 didn't close. But before I tell you what The Orchestrator does about it, I need to tell you what broke, what the Anthropic data confirms, and why the language the industry uses for this problem is pointing at the wrong thing.

MSC IRINA is still on the watchlist.

Three containers. Two bills of lading. Gulf of Aden. Sonar watches the vessel and sends an alert when the risk score crosses the threshold. Severity emoji. Recommended action. Three buttons.

Then it waits for a human to click a button. I wrote that line in Build Log #1 as if the waiting were fine. It's been bothering me since.

The waiting is not fine. It is the problem. The alert arrives at 03:14. The human sees it at 07:30. Four hours and sixteen minutes in which the carrier could have been contacted, the ETA revised, the consignee notified, the exception logged. Four hours where Sonar - for all its sophistication - is doing exactly what a spreadsheet would do. Sitting there. Waiting.

Sonar solved the first gap. The time between a crisis happening and your cargo being affected. That gap cost operations teams real money during the Red Sea disruptions. Sonar closes it. But there is a second gap. The time between knowing and acting. And that one I hadn't touched.

The Control Tower experiment - and what broke it

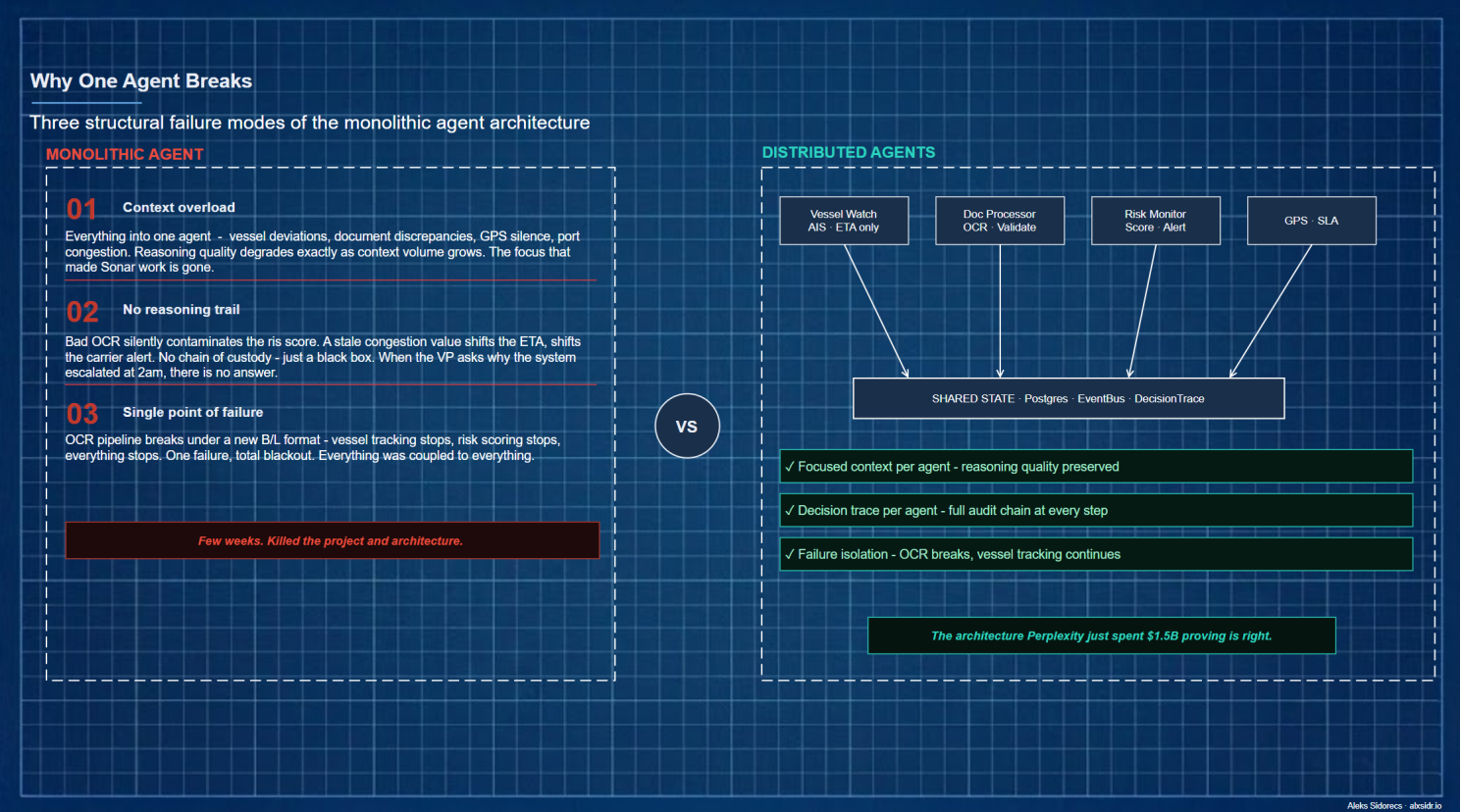

After Sonar, the next step felt obvious: expand. One intelligent agent at the centre - watching vessel positions, reading documents, tracking drivers, scoring risk, calling carriers. Everything wired together. Build the Control Tower the whole enterprise logistics industry promises.

So I built it. Or started to.

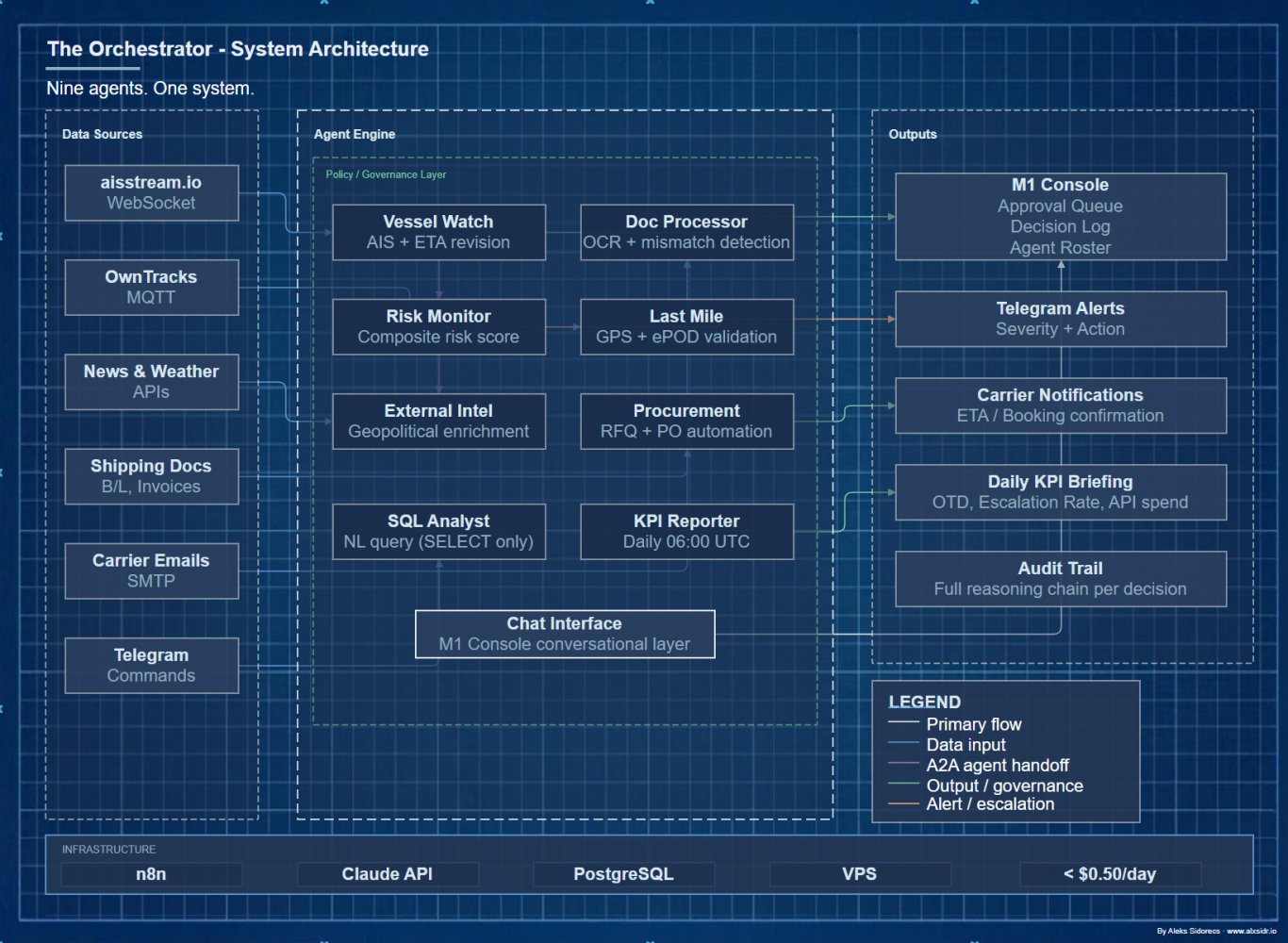

The design was clean on paper: one orchestrator agent receiving all inputs - AIS signals, carrier emails, customs events, weather feeds, freight rate changes - reasoning across all of them, deciding what mattered, and sending the right alert to the right person.

It failed. Not dramatically. Gradually and uselessly. Three failure modes, all of them predictable in hindsight to anyone who has run operations at scale.

Context overload. When you give one agent the responsibility of reasoning about a vessel deviation and a document discrepancy and a driver's GPS silence and a port congestion spike - all at once - it doesn't get smarter. It gets confused. Reasoning quality degraded exactly as context volume grew. I poured in the full operational picture and got hedged, generic outputs that couldn't be acted on.

Sonar's Analyst agent worked precisely because it only answered one question: what is the actual threat in this specific region right now? Strip away that focus and you strip away the usefulness.

The missing reasoning trail. When one agent did everything, failures were invisible. A bad OCR extraction silently contaminated the risk score. A stale congestion value influenced an ETA calculation that influenced a carrier alert. There was no chain of custody - just a black box that had processed something and emitted an answer. In operations you cannot have that. When the VP asks why the system escalated to backup carrier at 2pm, you need to show the chain, step by step. I had no chain.
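To make "chain of custody" concrete, here is a minimal sketch of the idea, not the actual implementation. It assumes every agent step is logged with a pointer to the step whose output it consumed. The `Step` record, the `trace` function, and the agent names are all hypothetical, invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    step_id: str
    agent: str                       # hypothetical agent name
    summary: str                     # what the agent concluded
    parent_id: Optional[str] = None  # the step whose output this one consumed


def trace(steps: dict, step_id: str) -> list:
    """Walk from a final action back to its root input, returned oldest first."""
    chain = []
    current = steps.get(step_id)
    while current is not None:
        chain.append(current)
        current = steps.get(current.parent_id) if current.parent_id else None
    return list(reversed(chain))


# Illustrative log: an OCR read feeds a risk score, which feeds an escalation.
log = {s.step_id: s for s in [
    Step("s1", "doc_reader", "OCR read container number from B/L"),
    Step("s2", "risk_monitor", "risk score raised after mismatch", parent_id="s1"),
    Step("s3", "carrier_liaison", "escalated to backup carrier", parent_id="s2"),
]}

chain = trace(log, "s3")
for step in chain:
    print(f"{step.agent}: {step.summary}")
```

With a record like this, the answer to "why did we escalate at 2pm" is a walk back through the parents rather than a guess about what the black box did.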

Single point of failure. When the document processing logic broke under a new B/L format, it brought everything down. Vessel tracking stopped. Risk scoring stopped. Everything was coupled to everything. One agent doing everything is one point of failure with no isolation.

I killed the project in the middle of development. So now I am starting again from scratch.

The industry just confirmed it - twice

The same week I scrapped the single-agent architecture, two things appeared that crystallised exactly why it was structurally wrong.

The first was AgentField. Their framing is direct: AI has outgrown frameworks and is moving from chatbots into backends - making decisions, not just answering questions. These agents need infrastructure, not prompt wrappers.

Their core architectural argument: distributed microservices beat monolithic scripts. Not marginally - architecturally. One agent doing everything creates the exact failure modes I'd found: no isolation, no traceability, no independent scaling.

The second was Perplexity Computer. At its core, Computer functions as a general-purpose digital worker - a system that accepts a high-level objective, decomposes it into subtasks, and delegates each subtask to whichever model is best suited. Nineteen specialised models, not one. Their own data showed that no single model commanded more than 25% of enterprise usage - because different tasks need different specialists.

The monolithic agent is not the destination. It's the stepping stone. What neither AgentField nor Perplexity provides is what actually matters for supply chain: domain knowledge encoded as governance. Perplexity Computer will orchestrate nineteen models to plan your Japan trip. It has no idea what a normal transit deviation looks like for cocoa out of Abidjan versus coffee out of Santos. It can't tell you whether the delta between MSCU7829341 and MSCU7829342 is an OCR error or a shipper mistake - and which one requires holding the shipment.

That knowledge doesn't come from a better model. It comes from twenty years on the floor. The Orchestrator is where that knowledge goes.

?! "Control Tower" is already the wrong term

Before I describe what The Orchestrator is, I want to challenge the language the whole industry uses - including language I am still using myself.

Control Tower is a visibility metaphor. An elevated position. Watching a field of operations. Seeing what others cannot. Communicating what is observed. The value is in the seeing and the telling.

That was a useful frame when the problem was genuinely information asymmetry - operations teams flying blind, no real-time data, dashboards built on stale exports. A Control Tower that gave you live vessel positions and carrier ETAs was a genuine step change.

But the information asymmetry problem is largely solved. The new problem is response latency - the gap between knowing and acting. And a Control Tower, by its very name and design logic, is built to see, not to act. When you build an agentic system inside a Control Tower mental model, you end up with exactly what I built: a sophisticated alert system that waits for a human to click a button.

What replaces it is not a better Control Tower. It is an Agentic-Driven Operating Model. Not a tower you observe from. An operating model where agents are first-class actors - with defined authorities, explicit policy boundaries, full decision audit trails, and human governance layered around the autonomous core rather than sitting above it.

The vocabulary encodes the assumption. The assumption shapes the design. That is a key lesson I took from my TRIZ training and development programme.

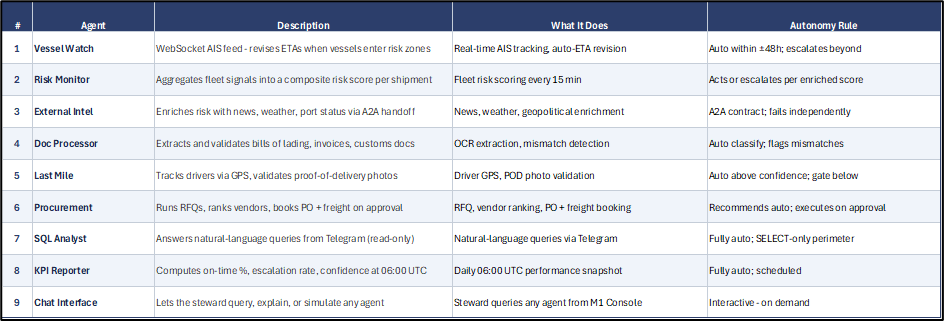

Nine agents. One system.

The Orchestrator is nine focused agents, each doing one thing well, communicating through shared state, governed by a policy layer the human controls. Same stack as Sonar - n8n, Claude, Postgres, Telegram, the same VPS - under fifty cents a day.

For this experiment I have decided to drop a few features related to end-to-end supply chain re-balancing through changes to supply and S&OP planning. They would require creating a lot of synthetic data points, which I will do later, once the current architecture is finished, tested and deployed. The main reason is that they would also require a new generation of algorithm, which will be a separate joy.

What OpenClaw taught me about tokens

I ran OpenClaw properly before building The Orchestrator - not as a demo but as a working agent on real tasks. If you already have some experience with it, as I do, you know the first thing you notice is how fast the API bill moves. Give it a complex objective and it chains reason-act-observe loops, feeding each iteration's history back into the next call's context. Hundreds of thousands of tokens per task is not hyperbole. By iteration five the model is reading four iterations of its own reasoning to produce the fifth.
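A back-of-envelope sketch of why the bill moves that fast. The numbers are illustrative assumptions, not measurements: a 2,000-token base prompt and 3,000 tokens of new history per iteration, with every call re-reading everything before it.

```python
def cumulative_input_tokens(base: int, per_iteration: int, iterations: int) -> int:
    """Total input tokens billed when every call re-reads all prior history."""
    total = 0
    for i in range(iterations):
        # Call i sees the base prompt plus the history of the i earlier iterations.
        total += base + i * per_iteration
    return total


# Assumed sizes, for illustration only.
five = cumulative_input_tokens(base=2_000, per_iteration=3_000, iterations=5)
twenty = cumulative_input_tokens(base=2_000, per_iteration=3_000, iterations=20)
print(five)    # 40000
print(twenty)  # 610000
```

The total grows roughly with the square of the iteration count, so four times the iterations costs about fifteen times the input tokens under these assumptions.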

The second thing you notice is that OpenClaw already has an answer to this. It does not inject all skills into every prompt - only the ones relevant to the current step. The framework's performance comes not from giving the model everything, but from giving it only what this specific call requires.

That is the design principle behind the token layer in The Orchestrator. Each agent receives a fixed, minimal payload - only the fields it needs, built by a dedicated context function. System prompts are held above the Anthropic caching threshold so they cost 90% less on repeat calls. Output is capped per agent and structured as JSON with bounded field lengths. Every call logs its cost to the database, so the morning report includes yesterday's API spend.
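A minimal sketch of what a dedicated context function and per-call cost estimate could look like. Everything named here is an assumption for illustration: the `AGENT_FIELDS` whitelist, the field names, the prices passed in, and the 90% discount on cached input taken from the figure above. It does not show the real payloads or the database write.

```python
import json

# Hypothetical whitelist: the only fields each agent is allowed to see.
AGENT_FIELDS = {
    "vessel_watch": ("vessel", "eta", "position"),
    "risk_monitor": ("vessel", "region", "risk_score"),
}


def build_context(agent: str, shipment: dict) -> str:
    """Return a compact JSON payload holding only the fields this agent needs."""
    payload = {k: shipment[k] for k in AGENT_FIELDS[agent] if k in shipment}
    return json.dumps(payload, separators=(",", ":"))


def call_cost(input_tokens: int, cached_tokens: int, output_tokens: int,
              in_price: float, out_price: float,
              cache_discount: float = 0.90) -> float:
    """Estimate one call's cost in dollars; prices are per million tokens."""
    fresh = input_tokens - cached_tokens                # billed at full input price
    cached = cached_tokens * (1.0 - cache_discount)     # billed at the discounted rate
    return (fresh * in_price + cached * in_price + output_tokens * out_price) / 1_000_000


shipment = {
    "vessel": "MSC IRINA",
    "eta": "unknown",
    "region": "Gulf of Aden",
    "risk_score": 0.82,
    "bl_text": "full bill of lading text that the risk agent never needs",
}
context = build_context("risk_monitor", shipment)
# Placeholder prices, not a quote of any real price list.
cost = call_cost(input_tokens=3_000, cached_tokens=2_000, output_tokens=300,
                 in_price=3.0, out_price=15.0)
print(context)
```

The point of the whitelist is that adding a field to the shipment record does not silently add it to every prompt. An agent only sees more when someone edits its entry on purpose.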

The difference between a mid-size company that can afford to run this and one that can't is not the technology. It is the discipline around context.

The governance moment

The M1 Orchestration Console is where the agent steward lives. I discussed this concept and the Agentic-Driven Operating Model in a previous publication.

Three panels. The agent roster - what each agent is authorised to do and is doing right now. The decision log - every autonomous action with full reasoning, reviewable, searchable, queryable. The approval queue - cases that crossed a policy threshold requiring sign-off, with the exact workflow resuming when you approve.

When you click Approve, it doesn't just send a message - it resumes the paused workflow from exactly the step it stopped at, via an n8n webhook token stored in the approval record. The agent was waiting. Now it continues. Exact resumption, no duplication.
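The "exact resumption, no duplication" behaviour can be sketched as a check-and-set on the approval record. This is a single-process illustration under stated assumptions, not the real workflow: the `Approval` record, its fields, and the `resume` callback are hypothetical stand-ins for the stored token and the webhook call.

```python
from dataclasses import dataclass


@dataclass
class Approval:
    approval_id: str
    resume_token: str         # hypothetical token identifying the paused workflow
    status: str = "pending"   # pending -> approved


def approve(record: Approval, resume) -> bool:
    """Resume the paused workflow once; repeated clicks are no-ops."""
    if record.status != "pending":
        return False              # already handled, do not resume again
    resume(record.resume_token)   # stand-in for calling the resume webhook
    record.status = "approved"    # only marked once the resume call returned
    return True


resumed = []                       # records which tokens were resumed
record = Approval("a-1", "tok-123")
first_click = approve(record, resumed.append)
second_click = approve(record, resumed.append)
print(first_click, second_click, resumed)
```

In a shared database the check and the status change would presumably need to be one conditional update, so that two stewards clicking at the same moment cannot both pass the check.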

The policy slider is the thing I keep coming back to. Drag it left and the agent asks permission. Drag it right and it acts. It is the autonomy boundary made physical. You are not configuring software. You are calibrating an operating model.
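One way to read the slider is as a single threshold compared against how consequential an action is. The sketch below assumes both values are normalised to the range 0 to 1. The `decide` function and the `impact` score are hypothetical; how impact would actually be scored is not covered here.

```python
def decide(impact: float, autonomy: float) -> str:
    """Return "act" when the action's impact is strictly below the slider, else "ask"."""
    for name, value in (("impact", impact), ("autonomy", autonomy)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return "act" if impact < autonomy else "ask"


low_impact = decide(impact=0.3, autonomy=0.5)    # slider in the middle, minor action
high_impact = decide(impact=0.7, autonomy=0.5)   # same slider, bigger action
slider_left = decide(impact=0.3, autonomy=0.0)   # slider fully left
print(low_impact, high_impact, slider_left)
```

With the slider fully left, nothing passes the strict comparison, so every case goes to the approval queue. Dragging it right lets progressively larger actions through without sign-off.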

That last point is the direct answer to what broke in the Control Tower experiment. The monolithic design destroyed isolation. Each failure cascaded. Each agent's reasoning was invisible. The Orchestrator's architecture restores all three: isolation, traceability, focused context. The three failure modes that killed the Control Tower are the three design principles of what replaced it.

When the next alert fires at 03:14, the Vessel Watch agent revises the ETA. Risk Monitor scores the exposure. External Intel enriches it with a carrier advisory. The approval queue has a fully populated decision request - context, reasoning, recommendation - waiting when the steward opens the console at 07:30. The human reviews a decision already made, not a crisis still unfolding.

That is what the second gap looks like when it closes. Where does it lead us? That is the reason I put a question mark in Diagram 1.

Stay tuned.

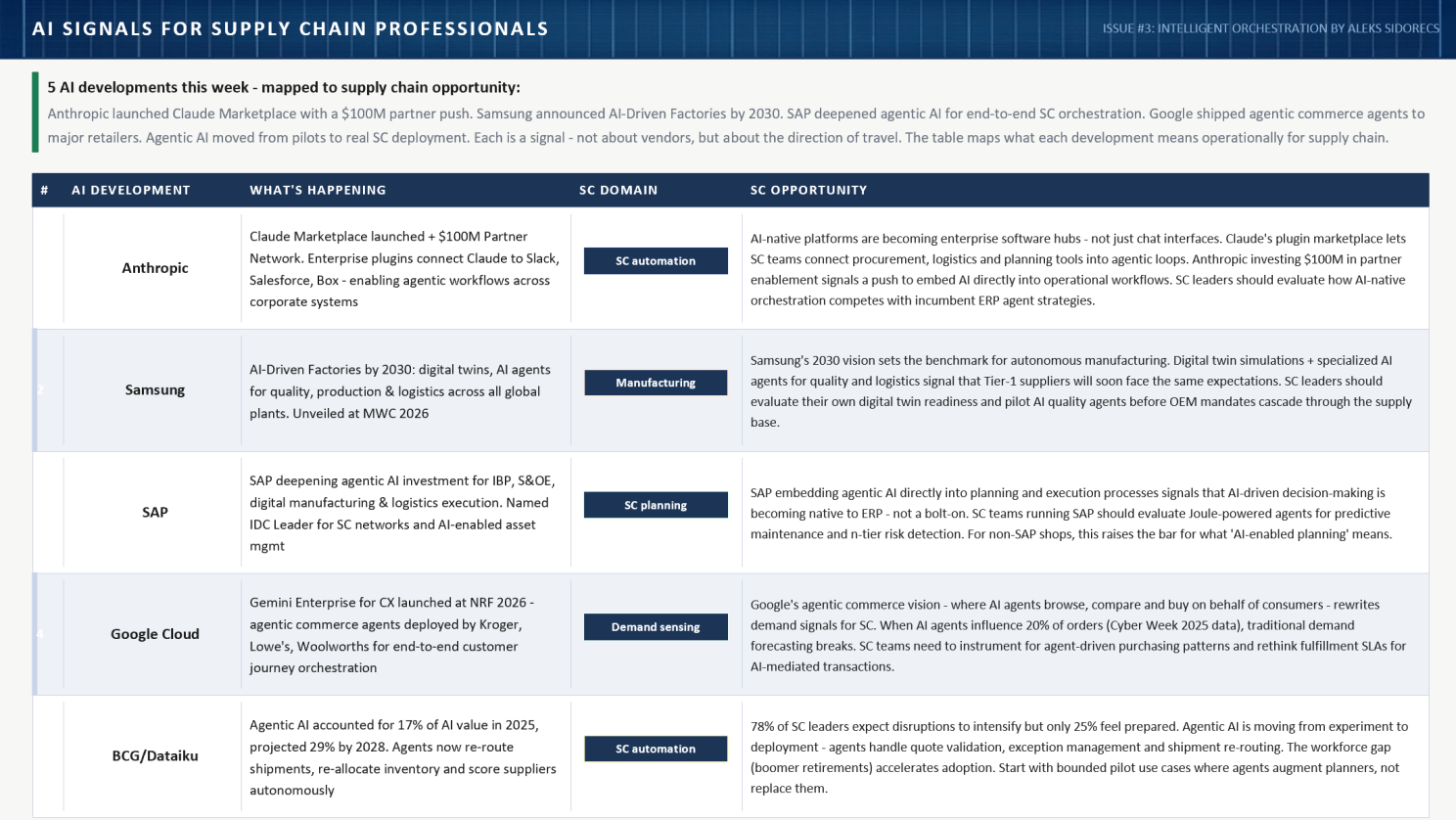

And some interesting news from the past week. I would especially like to highlight a very bold commitment announced recently by Samsung Electronics. As a practitioner and applied researcher, I am certainly interested in connecting with peers there to better understand the practicalities of such a roadmap.