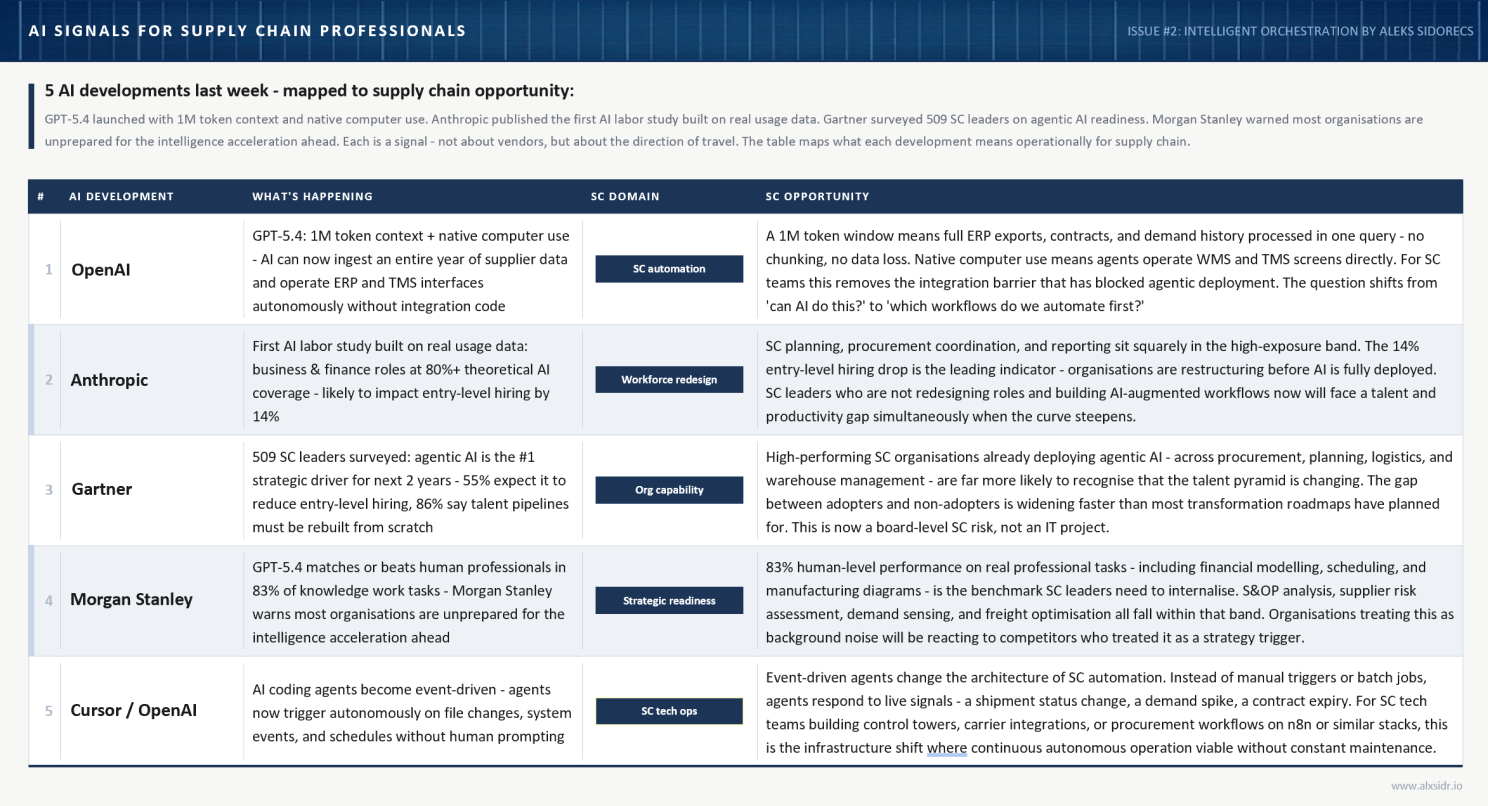

There was some interesting news; let me summarize it here before I dive deep into the one item that stands out the most:

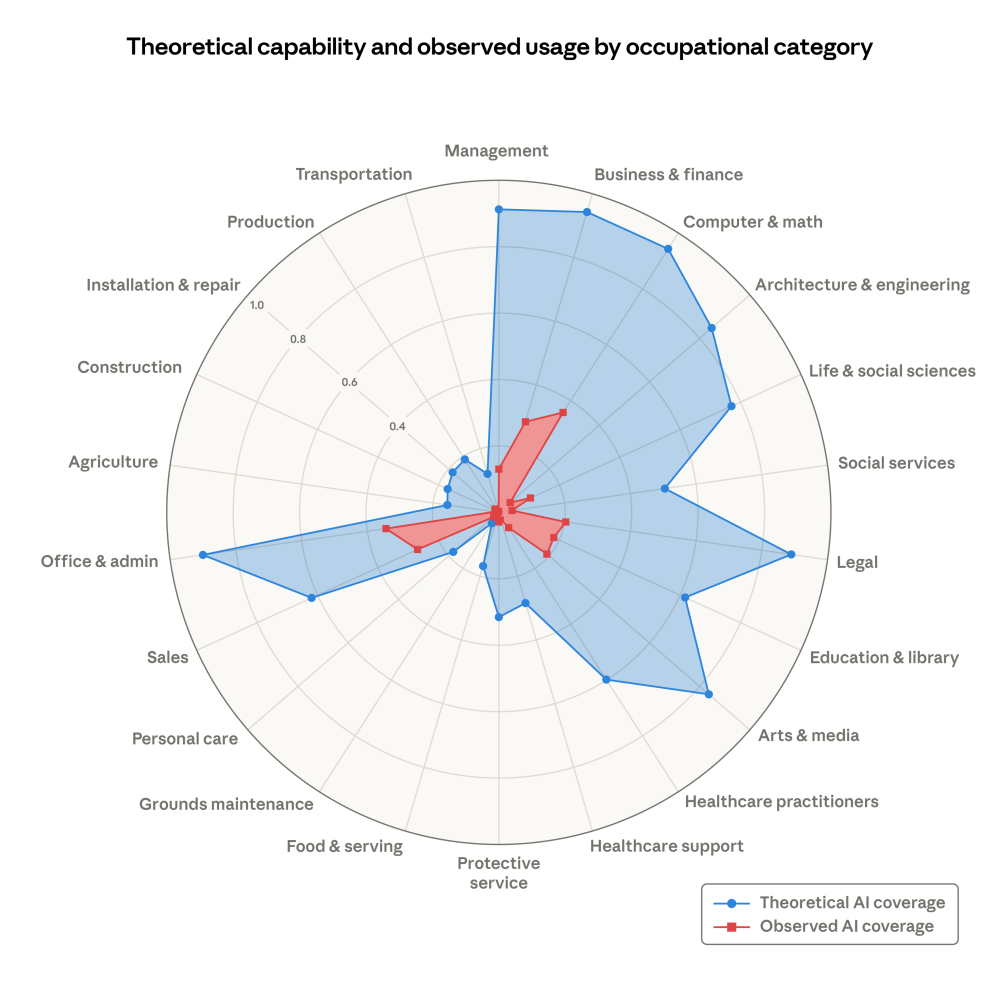

Now there is one that requires deeper reflection, so let me cover it here. On March 5th, 2026, Anthropic dropped a research paper that most people read as a labour market study. Fair enough - it is one. But if you work in supply chain, there's a chart in it that tells a different story. A more uncomfortable one.

The report, "Labour market impacts of AI: A new measure and early evidence", introduces a metric called observed exposure. It compares what LLMs could theoretically do across occupational categories with what people are actually using them for at work. Not what's possible. What's happening.

Three categories matter for us: Production, Transportation and Management. In all three, blue (what the models could theoretically do) dwarfs red (what people are actually using them for). The models can handle far more than organisations are letting them do.

First instinct: we're behind.

I'd push back on that. If adoption is low across the board, your competitors aren't materially ahead either. The chance to gain a structural advantage hasn't closed. It's barely been explored.

But that immediately raises the harder question. Why is the gap so large? And what would closing it actually require?

The Deployment Ceiling Is Not the Model

Anthropic's own methodology gives us a clue. They split AI use into two modes: augmentative (human uses Claude to work faster) and automated (Claude executes with minimal human involvement). Automated use gets weighted more heavily because it's more predictive of real displacement.

The finding: observed exposure remains a fraction of theoretical capability across every occupational category. Most organisations are still in augmentative mode. AI is helping people do their existing jobs slightly faster. It is not changing how the work gets structured.

The deployment ceiling is not the model. It's the organisation.

I've been chewing on this for a while, and the Anthropic data confirms something I keep coming back to: the bottleneck for agentic AI at scale is the operating model. Not the technology. Not the model weights. Not the API. The organisational container the technology gets poured into.

I've spent 20-plus years inside supply chain operating models - building them, inheriting broken ones, redesigning them post-acquisition. I helped integrate 80 countries during the LafargeHolcim merger. I've built greenfield functions from nothing at Olam, run turnaround programmes at Zeppelin and P&G, and deployed cutting-edge solutions during my consulting work at Julius & Clark. The pattern is always the same: the technology arrives before the organisation is ready to absorb it.

That's what the blue-red gap is showing us. Except this time, the technology isn't an ERP upgrade or a TMS rollout. It's an autonomous agent that can make decisions. And the operating model isn't even close to ready.

Why Supply Chain Is Stuck

If you've worked in this space, you'll recognise the forces that hold the red area back. They're not new. They're the same forces that slowed every technology adoption wave before this one - just sharper now because the stakes are higher.

Trust, risk and misaligned incentives. Operational teams resist an opaque algorithm overriding schedules during execution. And honestly? I get it. I've watched planners override perfectly good system recommendations because the system couldn't explain itself. Leaders worry about service breakdowns and unclear accountability - if the AI recommended it and it failed, whose problem is it? Meanwhile, ROI frameworks fixate on cost savings when the actual upside is in mitigating disruption, compressing cycle times and protecting service levels.

The highest-value work sits in the outliers. AI is excellent at handling volatility, disruption response, substitution logic, adaptive scheduling. But those scenarios are chaotic, steeped in institutional memory and resistant to being written down. Teams fall back on reactive problem-solving because it feels more controllable. Here's the thing: the situations where AI could deliver the most impact are exactly the ones where organisations are least willing to let it operate.

Fragmented data is the silent blocker. Most supply chains still run across disconnected ERP, warehouse and transport systems held together with spreadsheets and workarounds. Core reference data - products, sites, lead times - is riddled with inconsistencies. Without reliable inputs, AI stays in the lab. I've seen this pattern at every company I've worked in: the data conversation is the one nobody wants to fund until the system falls over.

This fragmentation isn't uniform. I've written before about supply chain asymmetries - the structural differences between symmetric, asymmetric and hybrid supply chain architectures. In symmetric chains, data flows are balanced and predictable enough that an agent can work with what's already there. In asymmetric chains - think agricultural commodities funnelling through millions of smallholder producers - data is scattered across informal networks and paper-based intermediaries. The blue-red gap doesn't just vary by occupation. It varies by supply chain architecture. And in the most complex, non-linear systems where AI could arguably add the most value, the data preconditions are hardest to meet.

None of these are model capability problems. They're process design problems. Governance problems. Accountability problems. Data architecture problems.

They're operating model problems.

The Structural Mismatch

An operating model is the blueprint for how an organisation delivers value - processes, people, technology, governance and the accountability relationships between them. Every element of that blueprint, as it exists in most enterprises today, assumes a human on the other end of each decision node.

Escalation paths route to a manager. Approval workflows need a named signatory. Accountability structures assign ownership to someone on the org chart. Performance metrics get reviewed quarterly by people who can be held responsible for the numbers.

Agentic AI doesn't fit any of those assumptions.

An agent doesn't appear on an org chart. It can't be held accountable in any conventional sense. It doesn't escalate because it feels uncertain - it escalates only if you've designed that behaviour into its architecture. And in a multi-agent system, where several specialised agents coordinate to complete a task, the question of who owns the outcome becomes genuinely hard to answer.

This is what the Anthropic chart is really showing us. Blue = what models can do. Red = what organisations have been willing to operationalise. The space between them isn't a technology gap. It's an operating model gap.

And it shows up in predictable ways: agents that complete tasks correctly but outside anyone's sanctioned authority, governance checkpoints that humans skip because the agent already acted, integration layers never designed for non-human actors making real-time decisions against live systems.

I've seen the same dynamic play out with every wave of supply chain automation. The technology works. The organisation doesn't know where to put it. The difference with agentic AI is that the stakes are higher - because the agent doesn't just provide information. It acts.

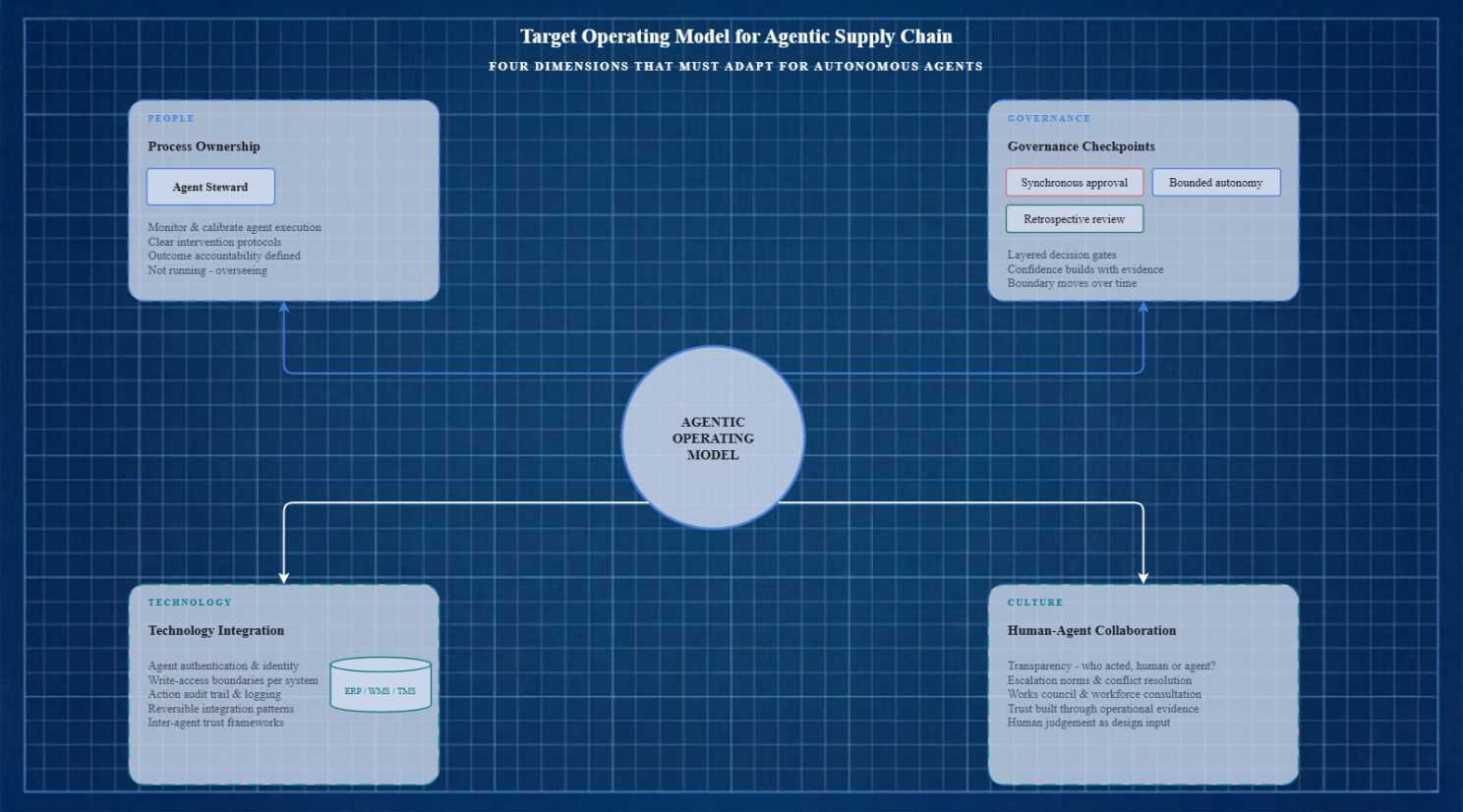

What Needs to Change in the Operating Model

The standard framework - people, process, technology, governance - is still the right structure. What changes is what you specify within each dimension once agents are in the picture. Not all four break at the same speed or in the same way.

Process Ownership

This is the first thing to break. Traditional process ownership assigns a human as accountable for end-to-end delivery. When an agent executes a significant chunk of that process autonomously, ownership goes fuzzy. The agent does the work. The human nominally owns it but may not see every step.

What's needed is a different kind of role: the agent steward. Not someone who runs the process, but someone who monitors, calibrates and intervenes in the agent's execution of it. That means equipping schedulers, coordinators and shift leads for this responsibility - not just data teams. It means revising procedures so people have clear protocols when AI surfaces a threat or proposes a reallocation. Who has authority. Who validates. Who reviews the outcome.

Most organisations haven't written this job description. They're going to need it soon.

Governance Checkpoints

Existing governance is built around decision gates - moments where a human reviews, approves or rejects before work proceeds. Agentic systems operate continuously and often faster than any human review cycle.

The answer isn't to strip out governance. It's to layer it differently.

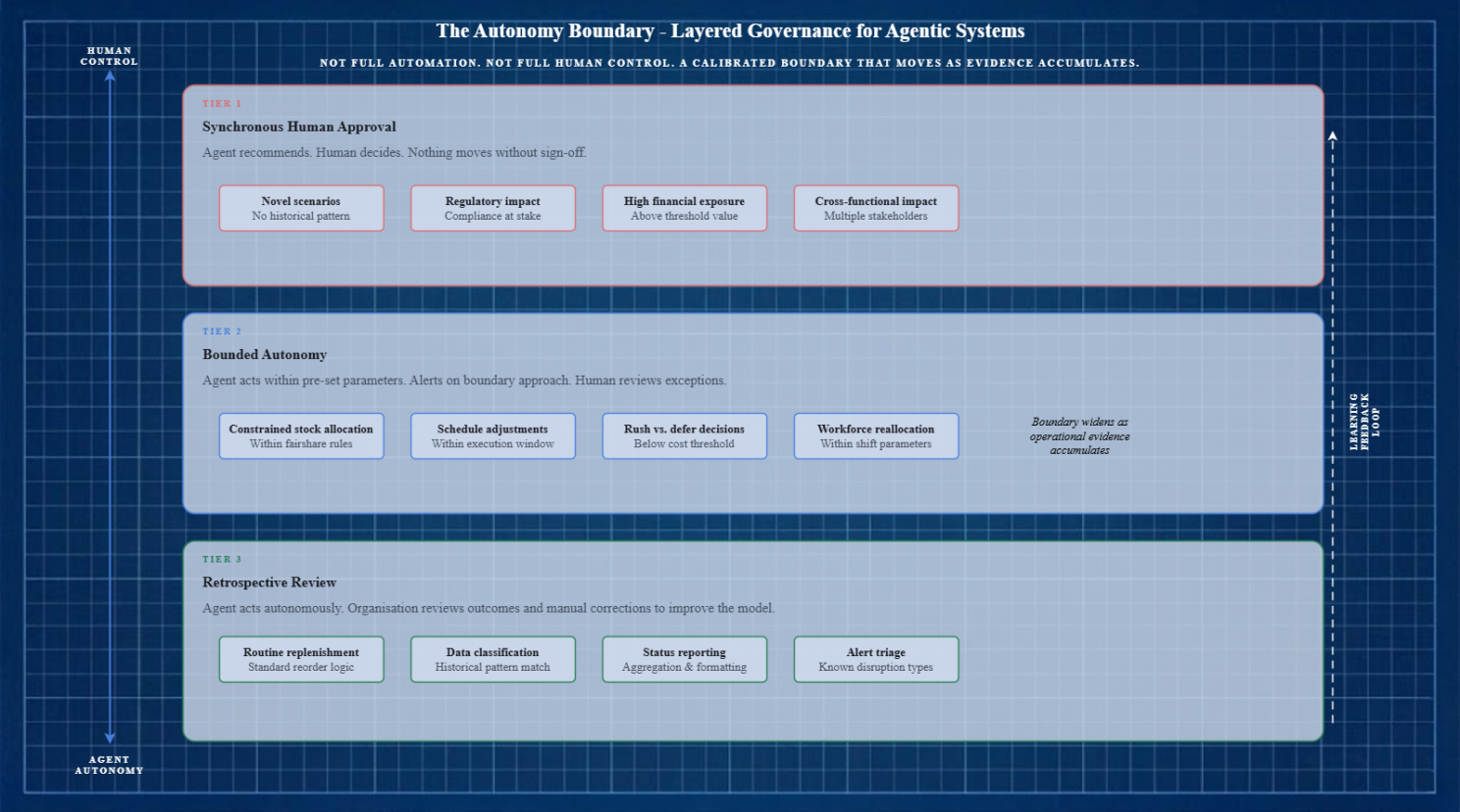

Some decisions need synchronous human approval. Others can proceed within pre-set boundaries - execution freezes during critical windows, sign-off mandated for consequential actions. A third category needs retrospective review rather than prior consent, with the organisation studying manual corrections to find where the model's logic falls short.

That layered approach is where genuine confidence gets built. Not full automation. Not full human control. A calibrated boundary that moves as evidence accumulates.
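To make the three layers concrete, here is a minimal sketch of how a decision could be routed to one of them. Everything in it is an assumption for illustration: the `Decision` fields, the two thresholds and the rule that a freeze window always forces human approval. Real boundaries would come from your own governance design, not from this code.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    HUMAN_APPROVAL = "synchronous human approval"
    BOUNDED_AUTONOMY = "proceed within pre-set boundaries"
    RETROSPECTIVE_REVIEW = "proceed, review afterwards"


@dataclass(frozen=True)
class Decision:
    kind: str               # hypothetical label, e.g. "reallocate_stock"
    cost_impact: float      # estimated cost impact of the action
    in_freeze_window: bool  # True during a critical execution window


# Hypothetical thresholds: the "calibrated boundary" that moves
# as evidence accumulates. The values are placeholders.
APPROVAL_THRESHOLD = 50_000.0
REVIEW_THRESHOLD = 5_000.0


def route(decision: Decision) -> Tier:
    """Pick the governance layer for a proposed agent action."""
    # Freeze windows and consequential actions always need sign-off.
    if decision.in_freeze_window or decision.cost_impact >= APPROVAL_THRESHOLD:
        return Tier.HUMAN_APPROVAL
    # Mid-sized actions proceed, but only inside the pre-set boundary.
    if decision.cost_impact >= REVIEW_THRESHOLD:
        return Tier.BOUNDED_AUTONOMY
    # Small actions proceed and are sampled for review afterwards.
    return Tier.RETROSPECTIVE_REVIEW
```

Moving the boundary then means changing two numbers on a review cadence rather than redesigning the workflow.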

Technology Integration

Agentic systems don't just read data. They write to it. They call APIs, update databases, trigger downstream workflows. Multi-agent architectures add coordination complexity that single-agent setups avoid entirely: inter-agent security, trust frameworks, hidden failure modes in distributed systems.

An operating model that hasn't specified how agents authenticate, what systems they can write to and how their actions get logged is not a governance framework. It's a liability waiting to materialise.

And given the data fragmentation that already hampers most supply chains - the siloed platforms, the unreliable reference data - this is probably the hardest dimension to get right. I've never seen a supply chain where the data was clean enough to hand the keys to an autonomous agent without significant prep work first.
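As a rough illustration of what "specified" could mean here, the sketch below puts a per-agent allow-list of writable systems in front of every write and logs each attempt, allowed or not. The class names, the `planner-agent` identity and the system names are hypothetical, and authentication itself is out of scope: the sketch assumes the `agent_id` has already been verified upstream.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str
    writable_systems: frozenset  # systems this agent may write to


@dataclass
class WriteGateway:
    policies: dict                       # agent_id -> AgentPolicy
    audit_log: list = field(default_factory=list)

    def write(self, agent_id: str, system: str, action: str) -> bool:
        """Check the allow-list, log the attempt, report the outcome."""
        policy = self.policies.get(agent_id)
        allowed = policy is not None and system in policy.writable_systems
        # Every attempt is logged, including the rejected ones.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "system": system,
            "action": action,
            "allowed": allowed,
        })
        return allowed


# Hypothetical setup: one planning agent allowed to write to the TMS only.
gateway = WriteGateway(
    policies={"planner-agent": AgentPolicy("planner-agent", frozenset({"tms"}))}
)
```

The point of the design is that the log captures rejected attempts too. Those are often the first sign that an agent is trying to act outside its sanctioned authority.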

Human-Agent Collaboration Norms

How do teams know when an agent is acting versus when a human is? Who do people escalate to when the agent's output looks wrong? What happens when agent and human reach different conclusions?

This is the dimension most companies will underestimate. The technology integration is hard, but it's a solvable engineering problem. Getting people to trust and work alongside an autonomous agent in a high-stakes operational environment? That's a design challenge of a different kind.

Why the Target Operating Model (TOM) Is the Right Vehicle

There's a structural property of the Target Operating Model that makes it unusually well-suited to this: it's a living document by definition. A TOM isn't a one-off blueprint. It's a coordinated set of sub-models - processes, organisation, decision-making, technology, locations - all evolving as the organisation changes.

This matters because agentic AI doesn't have an end state. Capabilities are expanding fast. The boundary between what needs human judgement and what can be safely delegated will shift as models improve, as trust builds through operational track record and as regulatory frameworks settle. A static 18-month transformation roadmap with fixed milestones will be out of date before it's delivered.

The TOM absorbs these shifts without needing a full redesign each time. It's the artefact that makes continuous evolution legible across the organisation.

I've worked inside TOMs that were treated as static artefacts - produced once during a transformation programme, filed away, never updated. Those are worth nothing. A TOM that's actively maintained, with the agentic operating model as one of its evolving sub-models, becomes the connective tissue between strategy and execution. That's where the value sits.

Start with a Decision, Not a Platform

If the operating model is the bottleneck, the practical starting point isn't a platform evaluation or a vendor beauty parade. It's choosing a contained decision area and building the operating model scaffolding around it.

Identify one repetitive operational choice that already has data behind it: rush vs. defer, distribute constrained stock, reallocate workforce capacity. Draw on six to twelve months of historical performance for a baseline. Anchor to a measurable outcome: plan attainment, premium freight ratio, unit economics.

Within that contained scope, specify the autonomy boundary. What can the agent decide on its own? What requires human sign-off? What triggers escalation? Define the steward role. Build the layered governance. Establish the collaboration norms. Quantify the improvement.

That's not a pilot. It's the first module of an agentic operating model. Once it works, you widen the boundary. The TOM captures each expansion as it happens.
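"Quantify the improvement" can be as simple as comparing the anchored metric before and after. The sketch below assumes premium freight ratio is defined as premium freight spend divided by total freight spend; your finance team may define it differently. The monthly figures are invented purely for illustration.

```python
from statistics import mean


def premium_freight_ratio(premium_spend: float, total_spend: float) -> float:
    """Share of freight spend that went on premium (expedited) freight."""
    if total_spend <= 0:
        raise ValueError("total_spend must be positive")
    return premium_spend / total_spend


def average_ratio(months: list) -> float:
    """Mean monthly ratio over (premium_spend, total_spend) pairs."""
    return mean(premium_freight_ratio(p, t) for p, t in months)


# Invented figures: six baseline months, then three months with the agent.
baseline_months = [(12.0, 100.0), (15.0, 120.0), (9.0, 90.0),
                   (14.0, 110.0), (11.0, 100.0), (13.0, 105.0)]
agent_months = [(8.0, 100.0), (9.0, 110.0), (7.0, 95.0)]

baseline = average_ratio(baseline_months)
with_agent = average_ratio(agent_months)
improvement = baseline - with_agent  # positive means the ratio went down

print(f"baseline {baseline:.1%}, with agent {with_agent:.1%}")
```

A short comparison window like this proves little on its own. It is the starting evidence that justifies widening the boundary, not the final verdict.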

Three principles should guide the work.

Be explicit about the autonomy boundary - and make it revisable. Review it on a set cadence as confidence builds. An undefined autonomy boundary isn't freedom. It's ambiguity that will resolve itself at the worst possible moment.

Build reversibility into the architecture. Design agent integrations so they can be paused, rolled back or replaced without cascading failures. An agent that's become a single point of failure in a critical process is an organisational risk, regardless of how well it performs on a Tuesday.

Treat human judgement as a design input, not a fallback. The temptation is to define human involvement as what happens when the agent breaks. That's backwards. Human judgement should be deliberately preserved for decisions where context, relationships, ethical weight or regulatory accountability make human involvement genuinely valuable - not just technically necessary.
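The reversibility principle is the easiest of the three to show in code. This is a minimal sketch, assuming every agent action can be paired with an explicit undo step, which is a strong assumption in live systems. `ReversibleRunner` and the stock example are hypothetical.

```python
from typing import Callable, List


class ReversibleRunner:
    """Runs agent actions so they can be paused and rolled back."""

    def __init__(self) -> None:
        self.paused = False
        self._undo_stack: List[Callable[[], None]] = []

    def execute(self, do: Callable[[], None], undo: Callable[[], None]) -> bool:
        """Run an action unless paused; remember how to reverse it."""
        if self.paused:
            return False
        do()
        self._undo_stack.append(undo)
        return True

    def rollback(self) -> int:
        """Undo all recorded actions, most recent first."""
        undone = 0
        while self._undo_stack:
            self._undo_stack.pop()()
            undone += 1
        return undone


# Hypothetical example: the agent moves 20 units between two sites.
stock = {"site_a": 100, "site_b": 40}


def move() -> None:
    stock["site_a"] -= 20
    stock["site_b"] += 20


def move_back() -> None:
    stock["site_a"] += 20
    stock["site_b"] -= 20


runner = ReversibleRunner()
runner.execute(move, move_back)  # agent acts
runner.paused = True             # steward pauses the agent
runner.rollback()                # and reverses what it did
```

If an action has no credible undo step, that is a useful signal in itself: it probably belongs on the human sign-off side of the autonomy boundary.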

Where This Leaves Us

The Anthropic report gives supply chain the clearest empirical picture yet of something a lot of us have sensed: the technology is ready. The organisation is not.

That's not a failure. It's a design problem. And it's one that favours organisations willing to do the unglamorous operating model work - governance redesign, process ownership re-specification, collaboration norms - over those chasing the next model benchmark or waiting for the vendor ecosystem to solve it for them.

The opportunity window is still open. The winners won't be the first to deploy an agent. They'll be the first to build an operating model that lets the agent actually function inside a real organisation, with real accountability, real governance and real people who know what to do when the agent gets it wrong.

I keep coming back to the blue area on that Anthropic chart. It's not a threat. It's an open invitation. But accepting it means changing the container, not just what you pour into it.