I think I need to build a factory.

That was me this Friday evening, staring at a screen full of running containers and realising something had shifted. Not in what I was building - but in how.

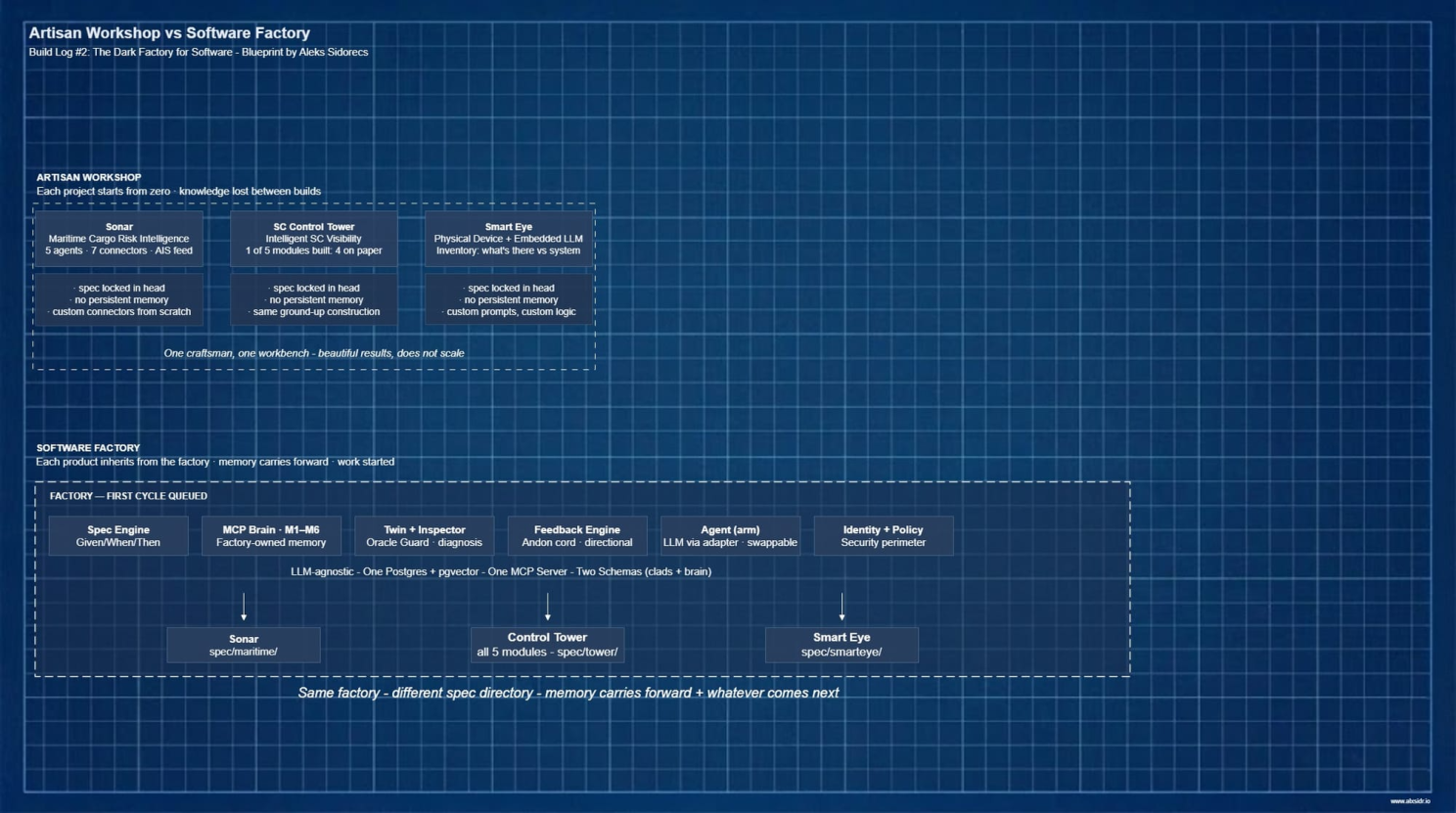

Three builds targeted in 6 weeks:

Sonar - a maritime intelligence system tracking cargo risk across the Red Sea, five AI agents, seven connectors, live AIS vessel data, geo-fencing, vector database, Telegram alerts, running on a €5/month server I've never visited.

Then a Supply Chain Control Tower - an AI-powered anomaly detection engine that watches shipment data and flags what a human would miss.

Then Smart Eye - a physical device concept with an embedded LLM that looks at what's on a warehouse shelf and tells you what's actually there versus what the system thinks is there. Still a work in progress, as I ran into several hardware-level roadblocks.

Each project got more ambitious. Each one works and is still evolving. And each one started from absolute zero - different problem, same blank canvas, same artisan process of wiring things together by hand.

Here's what bothered me, sitting there on that Friday: the agents were the easy part. The connectors, the prompts, the workflow logic - that was plumbing. The hard part was everything around the agent. The specification of what normal looks like for a given trade lane. The logic for when an alert is worth sending versus when it's noise. The memory of what was tried last week and failed. The rules for what the system should never do unsupervised.

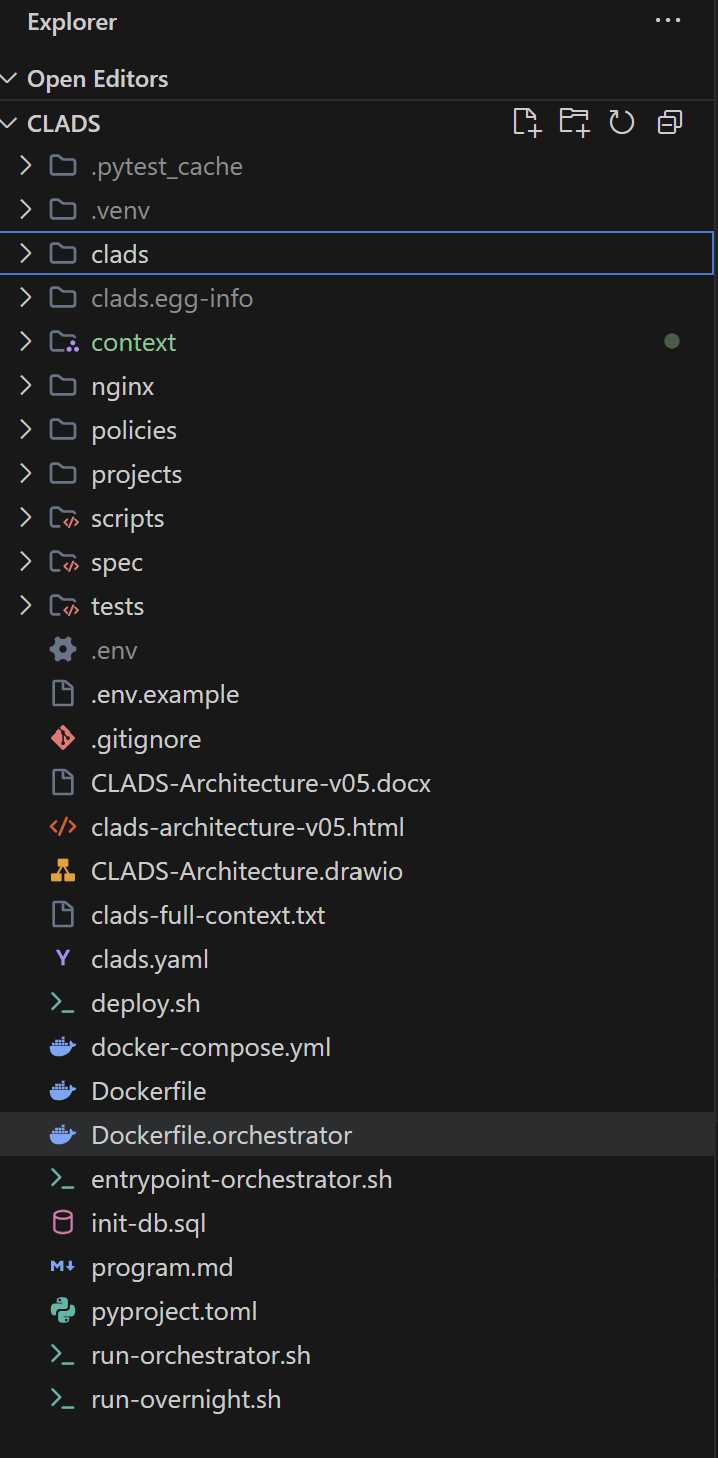

The Karpathy moment

But recently Andrej Karpathy published his autoresearch work - an AI agent running ML experiments autonomously overnight. The setup was almost offensively simple. A single instruction file. One target the agent could modify. One metric. A rule: if the score improves, commit; if not, reset and try again. You can find it at his GitHub repository.

The agent ran all night. Karpathy reviewed the results in the morning.

No orchestration framework. No multi-agent graph. No elaborate infrastructure. Just a tight loop - spec, execute, measure, commit or reset - running until the human woke up and looked at the log.
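To make the loop concrete, here is a minimal Python sketch of a commit-or-reset ratchet. It is not Karpathy's code: the function names, the toy metric, and the random "agent" are all hypothetical stand-ins, and the state lives in memory rather than in a git repository.

```python
import random
from typing import Callable, List, Tuple


def ratchet(
    candidate: float,
    propose: Callable[[float], float],
    score: Callable[[float], float],
    cycles: int,
) -> Tuple[float, List[str]]:
    """Keep a change only if the single metric improves; otherwise discard it."""
    best_score = score(candidate)
    log: List[str] = []
    for i in range(cycles):
        trial = propose(candidate)    # execute: the agent modifies the one target
        trial_score = score(trial)    # measure: one metric, nothing else
        if trial_score > best_score:  # gate: commit only on improvement
            candidate, best_score = trial, trial_score
            log.append(f"cycle {i}: commit score={trial_score:.4f}")
        else:                         # reset: fall back to the last committed state
            log.append(f"cycle {i}: reset score={trial_score:.4f}")
    return candidate, log


# Toy stand-ins (assumptions): the "agent" nudges a number at random,
# and the metric rewards getting close to a target value.
rng = random.Random(0)
target = 3.0
start = 0.0


def score_fn(x: float) -> float:
    return -abs(x - target)


def propose_fn(x: float) -> float:
    return x + rng.uniform(-0.5, 0.5)


best, log = ratchet(start, propose_fn, score_fn, cycles=200)
```

The committed score can only go up, which is the whole point of the gate. The log is what the human reads in the morning.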

That landed differently for me than most AI research does. Not because of the AI part. Because of the factory part. A fixed instruction set. A single quality metric. A commit-or-scrap gate. That's not a research innovation. That's a production line.

Anyone who has set up a manufacturing cell recognises the pattern. Work instruction goes in. Machine executes. Inspector checks. If it passes, the part moves forward. If not, it goes back or gets scrapped. The machine doesn't argue. Doesn't improvise. Doesn't decide the spec was probably wrong.

Karpathy wasn't building an AI researcher. He was building a lights-out cell. And it clicked - that's what my three builds had been missing. Not better agents. Better factory architecture around the agent.

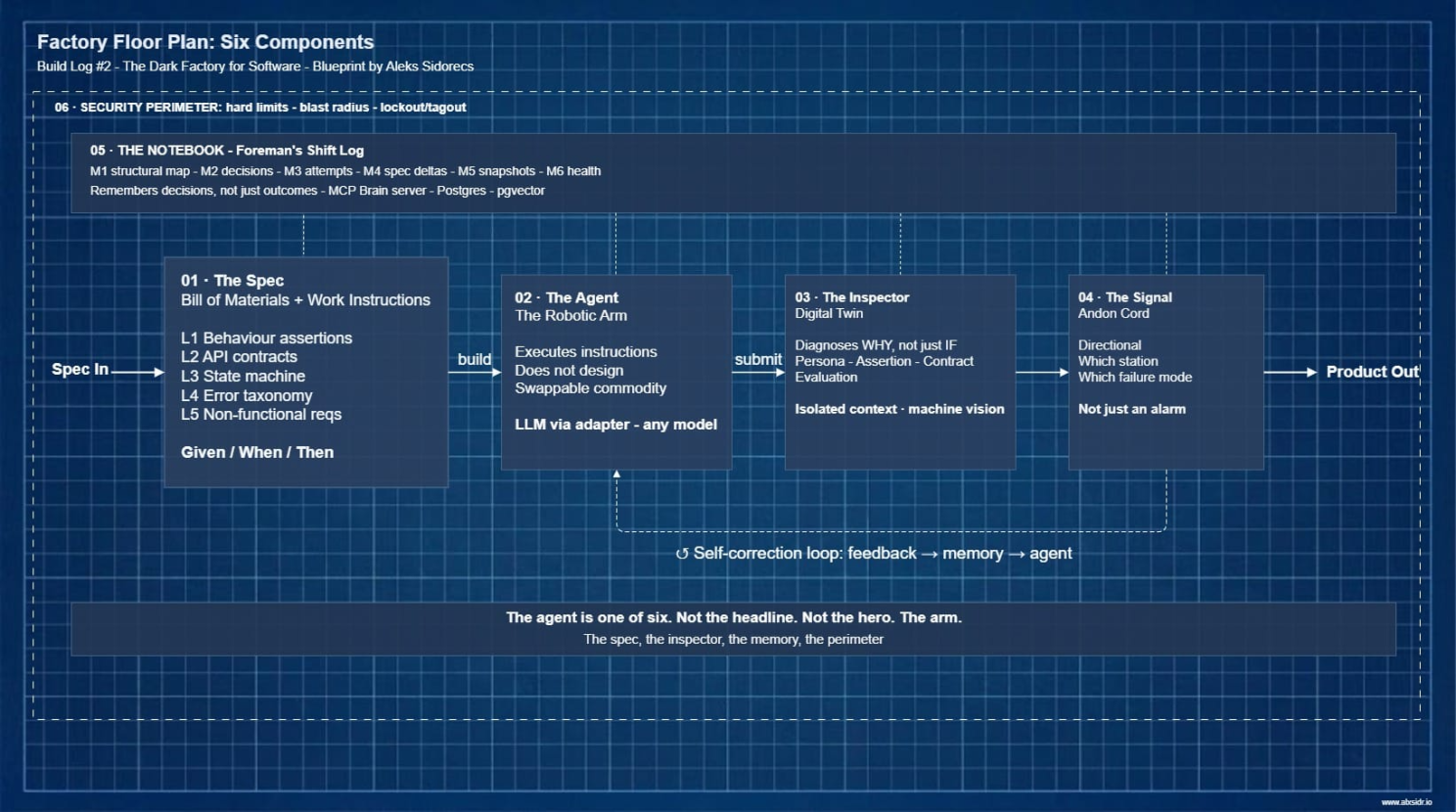

What a dark factory actually is

Supply chain professionals know this term. A dark factory - lights-out manufacturing - is a production facility that runs without human presence on the floor. Tesla's Gigafactory runs production lines where robots stamp, weld, and assemble battery packs and vehicle bodies around the clock - the alien dreadnought vision that Musk pitched in 2016, now quietly operational across multiple plants. Philips runs razor production in the Netherlands with 128 robots and fewer than 10 people on site.

The name is literal. Nobody there, nobody needs the lights on.

But here's what the headlines always leave out. Most lights-out factories are actually lights-sparse. One cell runs unattended. One shift operates autonomously. The rest still has people doing what machines can't: handling exceptions, adjusting to product variation, making the judgment calls that nobody thought to encode in the work instructions.

The honest version of the dark factory is not that it is without humans. It is humans in the control room instead of on the floor. The architect, not the operator.

That distinction is everything for what follows.

The artisan problem

Reflecting on those three builds, the pattern was obvious - and uncomfortable.

Sonar is handcrafted. Five agents wired together manually, tested against scenarios from two decades of commodity shipping, because the AI has no idea what a normal transit deviation looks like for cocoa out of Abidjan versus sugar out of Santos. The Control Tower is the same - one module is being built from scratch, four more designed on paper, each one requiring the same ground-up construction. Smart Eye pushed into hardware, but the AI layer is still artisan: custom prompts, custom logic, custom everything.

Domain knowledge is the real engine in every case. The AI is the wrench. Twenty years of supply chain operations make the agents work.

But the wrench got all the attention. Every build log, every LinkedIn post, every conversation started with "I used Claude to..." or "the AI agent monitors..." as if the agent was the protagonist. It wasn't. The specification was. The data model was. The alert logic was. The agent is a commodity - replaceable, swappable, increasingly interchangeable.

That reframing hit hard. Not because it diminished what the agents did. Because it elevated everything else. The spec, the inspection logic, the memory, the safety perimeter - those are the hard parts. Those are the parts that don't exist in anyone's weekend tutorial. And those are the parts I was rebuilding from scratch every single time.

The Control Tower has five modules. One is being built. If the next four require the same artisan process - the same ground-up wiring, the same blank canvas, the same knowledge locked in my head instead of in a system - they'll take four times the effort of the first. That doesn't scale. Not for the Control Tower. Not for the next product after that.

One artisan, one workbench, one product. Beautiful results. Does not scale.

Six things every dark factory needs

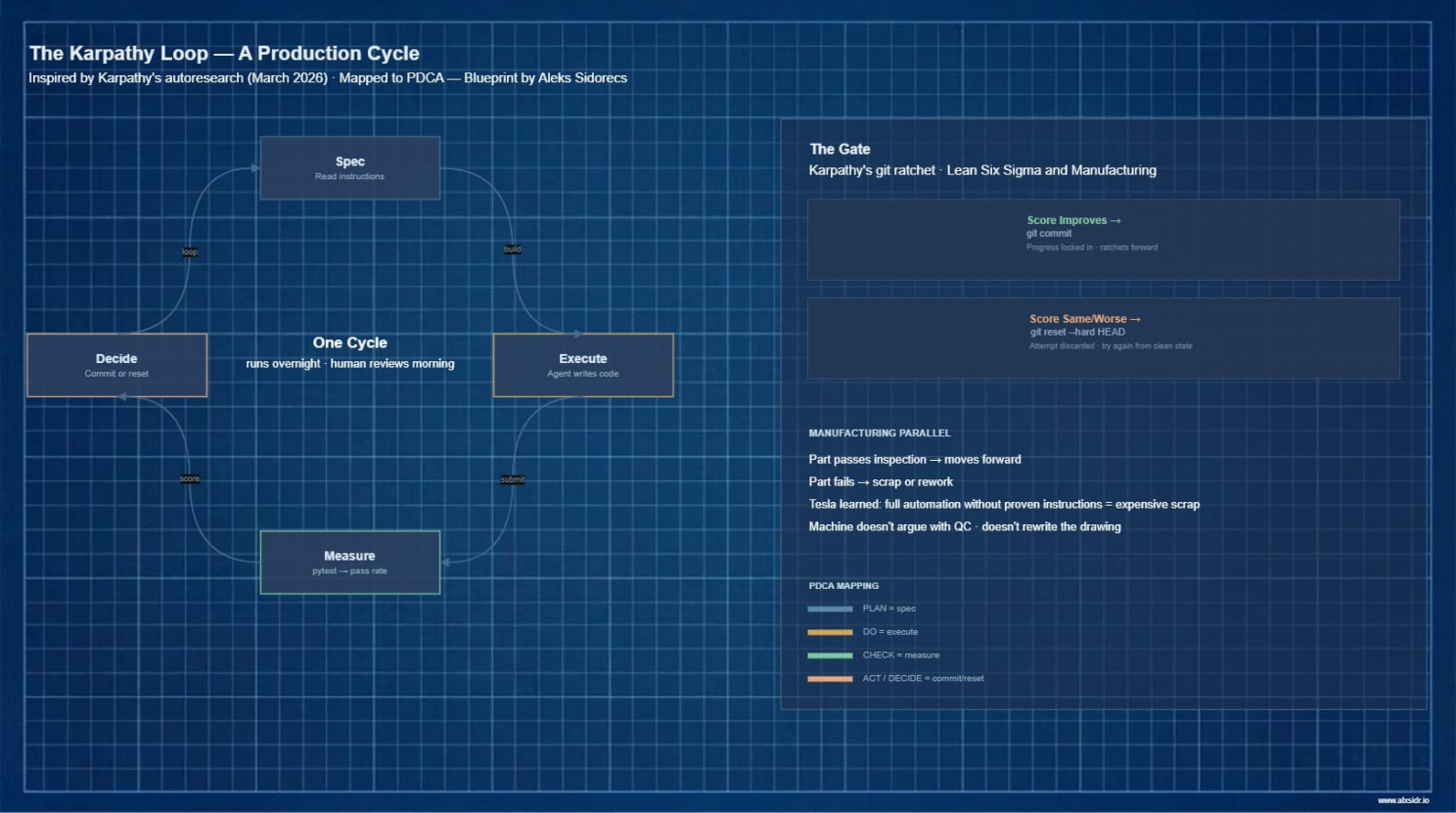

When I stopped looking at the agents and started looking at the factory, the same six components appeared in every build - whether I'd designed them deliberately or stumbled into them by instinct.

The specification. Manufacturing calls it the bill of materials plus work instructions. Without it, the robot doesn't know what to produce. Without it, the AI doesn't know what counts as an anomaly versus Tuesday. Every agent I built got a spec. It was just locked inside my head instead of written somewhere the next project could inherit.

The agent. The robotic arm. Executes instructions. Does not design the product. Least interesting component in the factory and the one that gets the most airtime. Swappable. Any capable arm will do.

The inspector. In a dark factory, quality inspection uses machine vision - not just pass or fail but: this weld is 2mm off on the left seam, likely fixture drift on station 3. The software equivalent is a testing system that diagnoses why something failed, not just that it did. Most agent builds have tests. Very few have diagnosis.

The signal. Manufacturing calls it the andon cord. Not just an alarm - a directional signal. Which station, which part, which failure mode. "Test failed" is useless. "Ownership check missing in the canvas repository, line 47" is actionable.
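A rough Python sketch of the difference between a test and a diagnosis, paired with a directional signal. Everything here is an assumption for illustration: the shipment fields, the 48-hour limit, and the check names are hypothetical and not taken from any of the three builds.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Diagnosis:
    station: str       # which check raised the signal
    part: str          # which record or artefact is affected
    failure_mode: str  # what kind of failure it is
    likely_cause: str  # the inspector's best guess at why


Check = Callable[[dict], Optional[Diagnosis]]


def check_eta_present(shipment: dict) -> Optional[Diagnosis]:
    """Hypothetical check: every shipment record must carry an ETA."""
    if shipment.get("eta") is None:
        return Diagnosis(
            "eta_check", f"shipment {shipment['id']}",
            "missing_field", "connector returned no ETA",
        )
    return None


def check_delay(shipment: dict, max_delay_hours: float = 48.0) -> Optional[Diagnosis]:
    """Hypothetical check: flag delays above an assumed limit."""
    delay = shipment.get("delay_hours", 0.0)
    if delay > max_delay_hours:
        return Diagnosis(
            "delay_check", f"shipment {shipment['id']}",
            "threshold_exceeded", f"delay {delay}h is above the {max_delay_hours}h limit",
        )
    return None


def inspect(shipment: dict, checks: List[Check]) -> List[Diagnosis]:
    """Run every check and collect diagnoses instead of a bare pass/fail."""
    findings = []
    for check in checks:
        diagnosis = check(shipment)
        if diagnosis is not None:
            findings.append(diagnosis)
    return findings


def signal(d: Diagnosis) -> str:
    """The andon cord: station, part, failure mode, likely cause."""
    return f"[{d.station}] {d.part}: {d.failure_mode} ({d.likely_cause})"


shipment = {"id": "S-001", "eta": None, "delay_hours": 72.0}
findings = inspect(shipment, [check_eta_present, check_delay])
messages = [signal(d) for d in findings]
```

An empty `findings` list is a pass. Anything else tells the next cycle where to look and why, which a boolean cannot do.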

The notebook. The foreman's shift log. What was tried. What worked. What was rejected, and why. In supply chain operations, tribal knowledge walks out the door when experienced people leave. The memory layer is the documentation that prevents knowledge loss between sessions. Not a summary - summaries lose precision exactly when precision matters most. A structured record that remembers decisions, not just outcomes.

The perimeter. Safety systems in automated factories exist not because the robot is malicious. The robot is powerful, fast, and has no understanding of context. Light curtains. Emergency stops. Lockout/tagout. Hard limits the machine cannot cross regardless of what the programming says. The software equivalent: boundaries on what the agent can touch, which files it can modify, which systems it can reach. The agent isn't trying to corrupt your production database. It just doesn't know it shouldn't.
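One way to sketch a file-level perimeter in Python is an allowlist of directories that is checked before any write. The workspace name, the allowed roots, and `PerimeterError` are hypothetical; a real perimeter would also need limits on network and database access, which this sketch does not cover.

```python
from pathlib import Path
from typing import List


class PerimeterError(Exception):
    """Raised when the agent tries to touch a path outside its allowed area."""


def assert_inside_perimeter(target: str, allowed_roots: List[str], workspace: str) -> Path:
    """Return the resolved path if it sits under an allowed root, else raise."""
    # resolve() collapses ".." segments, so traversal tricks are caught here
    resolved = (Path(workspace) / target).resolve()
    for root in allowed_roots:
        root_path = (Path(workspace) / root).resolve()
        if resolved == root_path or root_path in resolved.parents:
            return resolved
    raise PerimeterError(f"{target} is outside the perimeter {allowed_roots}")


# Hypothetical layout: the agent may only modify one module and its tests.
WORKSPACE = "workspace"
ALLOWED = ["src/module_a", "tests/module_a"]

ok_path = assert_inside_perimeter("src/module_a/rules.py", ALLOWED, WORKSPACE)

blocked = []
for attempt in ["../prod/database.yml", "src/module_a/../../secrets.env"]:
    try:
        assert_inside_perimeter(attempt, ALLOWED, WORKSPACE)
    except PerimeterError:
        blocked.append(attempt)
```

The check runs regardless of what the agent was asked to do, which is the software version of a light curtain: the limit sits outside the programming.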

Six components. Same six every time. Whether you're building maritime intelligence with Sonar, anomaly detection in a Control Tower, or inventory recognition with Smart Eye.

The agent is one of the six. Not the headline. Not the hero. The arm.

Why the lights aren't off yet

The architecture for this software factory exists. The six components are designed. The bootstrapping sequence follows the same discipline any supply chain professional would use to stage a warehouse automation rollout: one cell first, prove it, gate it, expand.

And the work has started. The first spec clauses are written. The first cycle is queued. The ratchet is ready to run.

But the lights aren't off yet. Tesla didn't start with the alien dreadnought. They started with one automated cell - stamping, then welding, then paint. Proved each station could run unattended. Learned the hard way that full automation without proven work instructions produces expensive scrap. Then they added the next cell. Then the next. The Gigafactory today is lights-sparse: heavily automated production with humans where judgment still matters.

The gates are measurable. Not vibes. The cell doesn't graduate until fifty cycles pass with quality above eighty percent. The second product line doesn't start until the first is at full pass rate. Overnight unattended runs for a full week before the orchestration layer expands.
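As a sketch, the first gate can be written as a few lines of Python. The reading here is an assumption: the cell graduates once the most recent fifty cycles each scored above 0.80. A pass-rate interpretation would need a different check, and the names below are hypothetical.

```python
from typing import Sequence

REQUIRED_CYCLES = 50      # from the gate: fifty cycles
QUALITY_THRESHOLD = 0.80  # from the gate: quality above eighty percent


def cell_graduates(quality_scores: Sequence[float]) -> bool:
    """True when the latest REQUIRED_CYCLES scores all exceed the threshold."""
    if len(quality_scores) < REQUIRED_CYCLES:
        return False  # not enough evidence yet
    recent = quality_scores[-REQUIRED_CYCLES:]
    return all(q > QUALITY_THRESHOLD for q in recent)


# An early bad cycle does not block graduation once fifty good ones follow it.
history = [0.55] + [0.85] * 50
ready = cell_graduates(history)
```

Under this reading, a single cycle at or below the threshold inside the window restarts the wait, which keeps the gate strict.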

As practitioners we recognise this. Pilot-then-scale. Phased rollout. The exact same governance you'd apply to a new WMS deployment or an ERP migration. The rigour isn't optional - it's what separates a factory from a hobby.

The four remaining Control Tower modules. The next version of Smart Eye. Whatever comes after that. All of them should come off the same production line, built from a spec, inspected by a twin, remembered by a notebook, bounded by a perimeter.

I'll keep posting how the factory actually performs against those real projects. Not the architecture. The results. How many cycles ran overnight. What the pass rate looked like in the morning. Where the spec was too vague and the agent built the wrong thing correctly. Where the ratchet worked and where it didn't.

The lights go off on the factory floor. The control room stays lit. CLADS (Closed Loop Autonomous Development System) is uploaded to my GitHub and ready for deployment to my VPS. The experiment continues.